RLHF vs Direct Preference Optimization: What Changes for Practitioners?

Many of us started with supervised fine tuning. Then came RLHF, reinforcement learning from human feedback, which became the dominant paradigm for aligning large language models. Now, there is growing interest in alternatives such as Direct Preference Optimization, often abbreviated as DPO.

Naturally, practitioners are asking:

Is RLHF still necessary?

Is DPO just a research curiosity?

What changes for teams building real systems?

Let me share how I am thinking about this shift from a practical lens.

Why RLHF Is Operationally Expensive

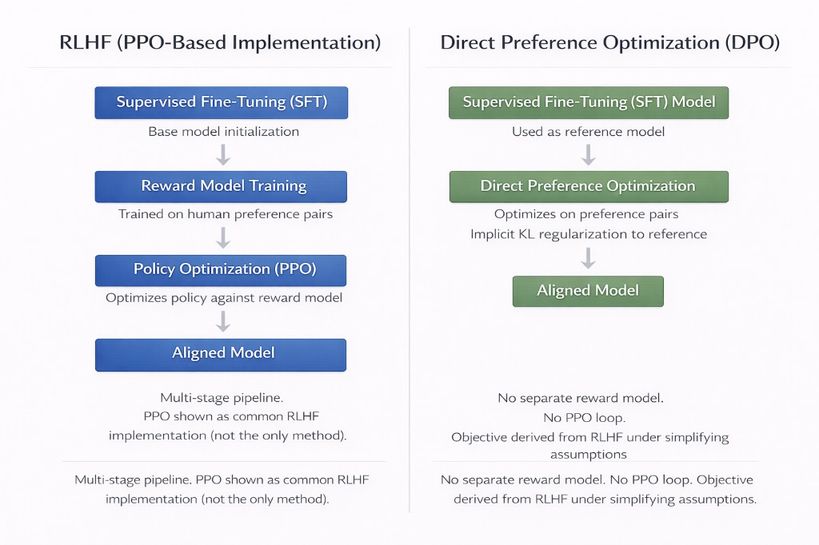

Classical RLHF pipelines typically involve three stages:

- Supervised fine tuning

- Training a reward model using human preference comparisons

- Reinforcement learning, commonly using PPO, Proximal Policy Optimization

The reinforcement stage is where complexity rises sharply.

With PPO based RLHF, you are effectively running policy optimization against a learned reward model. This introduces:

- Multiple model checkpoints

- Reward model maintenance

- Careful hyperparameter tuning

- Stability concerns during training

- Significant compute costs

Operationally, this means you are not just fine tuning a model. You are building a mini reinforcement learning research lab inside your organization.

From my experience, the most challenging part is not even the mathematics. It is the coordination overhead:

- Data labeling pipelines must be consistent

- Reward models drift as base models evolve

- Training instabilities can wipe out weeks of experimentation

- Safety regressions must be monitored continuously

For frontier labs, this complexity makes sense. For most enterprises, it is heavy.

What DPO Changes

Direct Preference Optimization reframes the problem.

Instead of explicitly training a reward model and then running PPO, DPO optimizes the model directly against preference pairs. Conceptually, it avoids the separate reward modeling stage and the reinforcement learning loop.

In practice, this brings several simplifications:

- No separate reward model to maintain

- No PPO policy optimization step

- Training resembles supervised fine tuning with a different objective

For practitioners, this is powerful.

Fewer moving parts means:

- Lower operational overhead

- Easier reproducibility

- Reduced training instability

- Simpler infrastructure requirements

That said, simplification does not mean triviality. High quality preference data is still essential. If anything, the pressure on data quality increases because there is no separate reward model to absorb noise.

Alignment vs Controllability

One nuance that often gets missed in this discussion is the difference between alignment and controllability.

RLHF, especially when implemented with large scale human feedback and careful reward shaping, can produce highly aligned behavior in open ended settings. It is powerful when you need:

- Broad behavioral shaping

- Safety guardrails across diverse prompts

- General purpose assistant behavior

DPO is strong when:

- You have clear preference signals

- You want targeted behavior shaping

- You are optimizing for specific product traits

For example, in enterprise settings where the model's scope is narrower, say legal drafting assistance or internal knowledge Q and A, DPO style fine tuning may be sufficient and operationally attractive.

If you are training a general purpose assistant exposed to unpredictable user input, more elaborate alignment pipelines may still be justified.

The Data Flywheel Challenge

Both RLHF and DPO rely heavily on high quality preference data.

This is where enterprises often underestimate the challenge.

Collecting preference pairs requires:

- Well defined annotation guidelines

- Trained reviewers

- Consistency checks

- Iterative refinement

The flywheel looks simple on slides: user interactions lead to preference collection, model update, improved outputs, and more users.

In reality, maintaining label quality at scale is hard. Bias creeps in. Annotator fatigue affects signal quality. Domain experts are expensive.

DPO lowers algorithmic complexity, but it does not remove the data problem. If anything, it makes the organization confront it more directly.

Enterprise Fine Tuning Trade Offs

From an enterprise lens, the decision often comes down to trade offs across four dimensions:

- Infrastructure complexity

- Talent requirements

- Compute cost

- Governance and safety risk

RLHF with PPO:

- Higher infrastructure complexity

- Stronger alignment potential

- Larger compute footprint

- Requires reinforcement learning expertise

DPO:

- Simpler pipeline

- Lower operational cost

- Easier integration into existing fine tuning stacks

- More sensitive to dataset quality

If you are running models inside a tightly scoped enterprise workflow, with strong guardrails and retrieval grounding, DPO can often be sufficient.

If you are deploying a broadly accessible assistant with high reputational risk, a more elaborate alignment stack may be worth the cost.

When Alignment Pipelines Are Justified

I often ask teams three questions:

- Is your model exposed to unpredictable public input?

- Is brand or regulatory risk extremely high?

- Do you need general behavioral shaping beyond task optimization?

If the answer to most of these is yes, investing in a more classical RLHF style pipeline can be justified.

If your system is domain bounded, retrieval grounded, and embedded inside internal workflows, simpler preference optimization may be more pragmatic.

Organizational Implications

The shift from RLHF to DPO is not only technical. It is organizational.

RLHF tends to centralize expertise. It requires a dedicated team with reinforcement learning depth, experimentation infrastructure, and safety evaluation frameworks.

DPO lowers the barrier for product teams to experiment with alignment. Fine tuning becomes more accessible. Iteration cycles can shorten.

However, this democratization also increases responsibility. More teams can fine tune models, which means governance frameworks must mature accordingly.

In many ways, the conversation is less about which algorithm wins and more about operational fit.

Closing Reflections

I see DPO not as a replacement for RLHF, but as an evolution in how we think about alignment engineering.

For some organizations, RLHF remains the gold standard. For many enterprises, DPO offers a compelling middle path, strong alignment improvements without the full reinforcement learning overhead.

As practitioners, our job is not to chase the most complex pipeline. It is to match the alignment method to the product risk, data maturity, and organizational readiness.

That is where the real architectural decision lies.