Model Routing and Multi-Model Systems

In recent quarters, most discussions around generative AI have focused on individual large language models. Bigger models, better benchmarks, more parameters.

But in production environments, a different architectural pattern is quietly emerging:

Multi-model systems with intelligent routing.

Instead of asking one model to do everything, we are beginning to design systems that decide which model should handle which task. This shift is subtle, yet it changes how we think about cost, latency, reliability, and control.

Let us explore why model routing is becoming foundational to serious AI systems.

1. Why One Model Is Not Enough

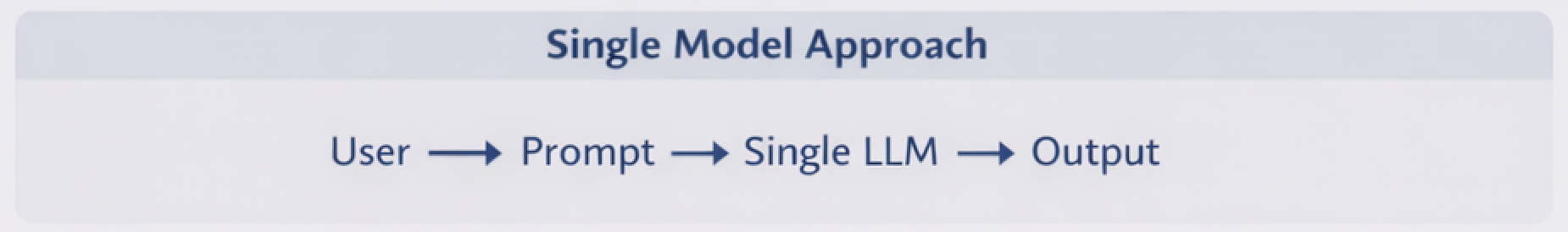

Early experiments often look like this: a single model receives every request and returns the response directly.

This works for prototypes. It does not scale well in production.

Different tasks have different requirements:

- Some require deep reasoning

- Some require structured extraction

- Some require speed

- Some require strict cost constraints

- Some require domain specialization

No single model optimizes for all of these simultaneously.

Large frontier models provide strong reasoning but are slower and more expensive. Smaller models are fast and affordable but may struggle with complex tasks. Domain-specific fine-tuned models can outperform general ones on narrow tasks.

The logical next step is obvious:

Use the right model for the right job.

2. What Is Model Routing?

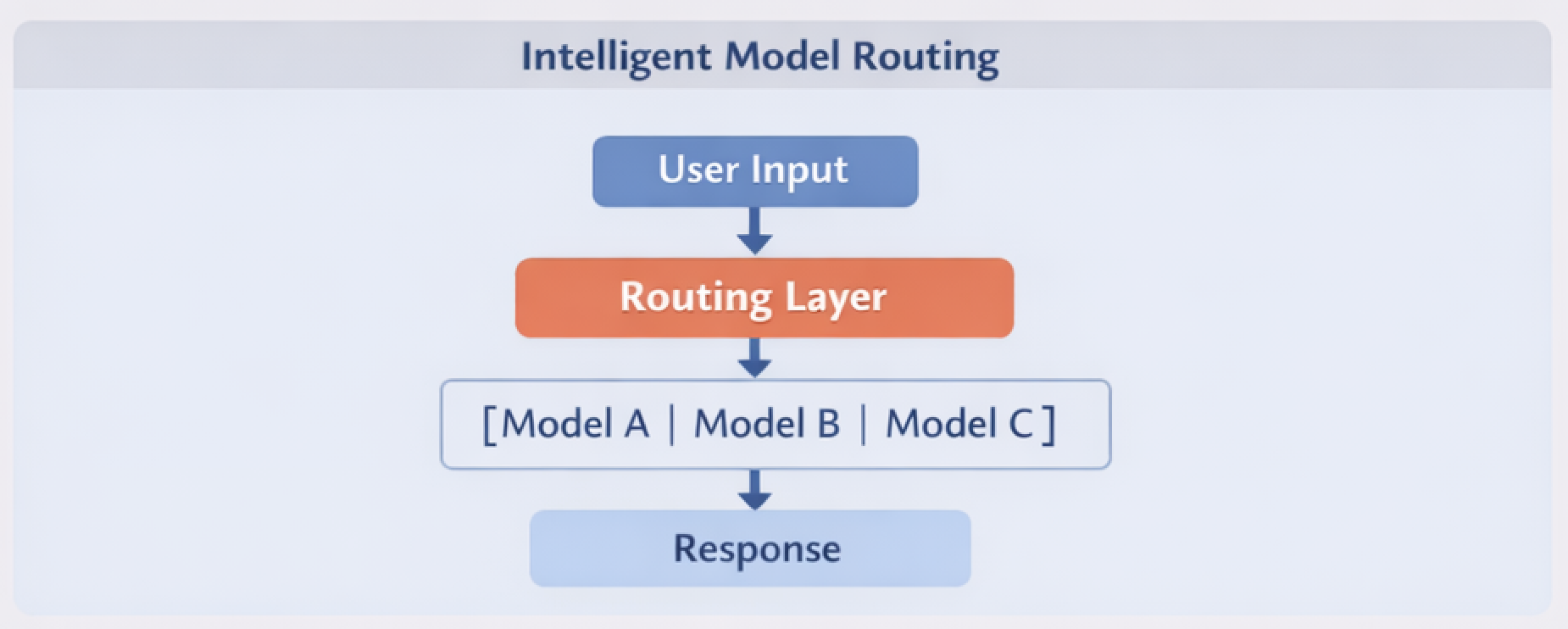

Model routing is a decision layer that selects which model should process a request based on defined criteria.

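At a high level, a request enters the routing layer, the router selects a model, and the selected model produces the response.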

The routing layer becomes part of your AI architecture, not just an API call.

It can make decisions based on:

- Intent classification

- Input length

- Risk level

- Cost thresholds

- Latency requirements

- Confidence scoring

- Output validation feedback

Routing is not just a technical optimization. It is an architectural boundary.
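
As a rough illustration of such a decision layer, here is a minimal sketch in Python. The `Request` fields, the thresholds, and the model names are all hypothetical placeholders, not a reference to any particular provider or library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    text: str
    risk: str = "low"            # hypothetical labels: "low" or "high"
    max_latency_ms: int = 5000   # latency budget supplied by the caller


def select_model(req: Request) -> str:
    """Return the name of the model that should handle the request.

    Rules are checked in priority order: risk first, then latency,
    then input length. All names and thresholds are illustrative.
    """
    if req.risk == "high":
        return "strict-safety-model"
    if req.max_latency_ms < 1000:
        return "small-fast-model"
    if len(req.text) > 2000:
        return "long-context-model"
    return "general-model"
```

Keeping the rules in one function makes the routing policy reviewable and testable on its own, independent of any model call.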

3. Common Routing Strategies

A. Intent-Based Routing

A lightweight classifier determines user intent.

For example:

- Summarization → smaller, efficient model

- Complex reasoning → larger model

- Code generation → code-optimized model

This pattern is computationally efficient and predictable.
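
A minimal sketch of this pattern follows. The keyword matcher stands in for the lightweight classifier (in practice this might be a small trained model), and the intent labels and model names are assumptions made for illustration.

```python
# Mapping from intent to model name; all names are hypothetical.
INTENT_TO_MODEL = {
    "summarization": "small-efficient-model",
    "reasoning": "large-model",
    "code": "code-optimized-model",
}


def classify_intent(text: str) -> str:
    """Toy keyword classifier standing in for a real intent model."""
    lowered = text.lower()
    if any(k in lowered for k in ("summarize", "summary", "tl;dr")):
        return "summarization"
    if any(k in lowered for k in ("function", "code", "bug", "script")):
        return "code"
    # Unrecognized requests fall through to the most capable model.
    return "reasoning"


def route_by_intent(text: str) -> str:
    return INTENT_TO_MODEL[classify_intent(text)]
```

Defaulting unknown intents to the larger model favors quality over cost; a cost-sensitive system might choose the opposite default.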

B. Cost-Aware Routing

You can define policies such as:

- Default to a small model

- Escalate to a larger model only if confidence is low

- Cap total token spend per request

This becomes critical when usage scales to millions of requests.
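
One way to express a spend cap is sketched below. The prices are invented for the example (real prices vary by provider and change over time), and `choose_model` is a hypothetical helper, not part of any library.

```python
# Hypothetical prices in USD per 1,000 tokens.
PRICE_PER_1K = {"small-model": 0.0005, "large-model": 0.01}


def estimated_cost(model: str, tokens: int) -> float:
    """Estimated spend in USD for processing `tokens` tokens on `model`."""
    return PRICE_PER_1K[model] * tokens / 1000


def choose_model(tokens: int, wants_escalation: bool, budget_usd: float) -> str:
    """Default to the small model; escalate only when escalation was
    requested (for example, because confidence was low) and the larger
    model still fits within the per-request budget."""
    if wants_escalation and estimated_cost("large-model", tokens) <= budget_usd:
        return "large-model"
    return "small-model"
```

With these assumed prices, a 100,000-token request costs about 1 USD on the large model, so a 0.50 USD cap keeps it on the small model even when escalation is requested.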

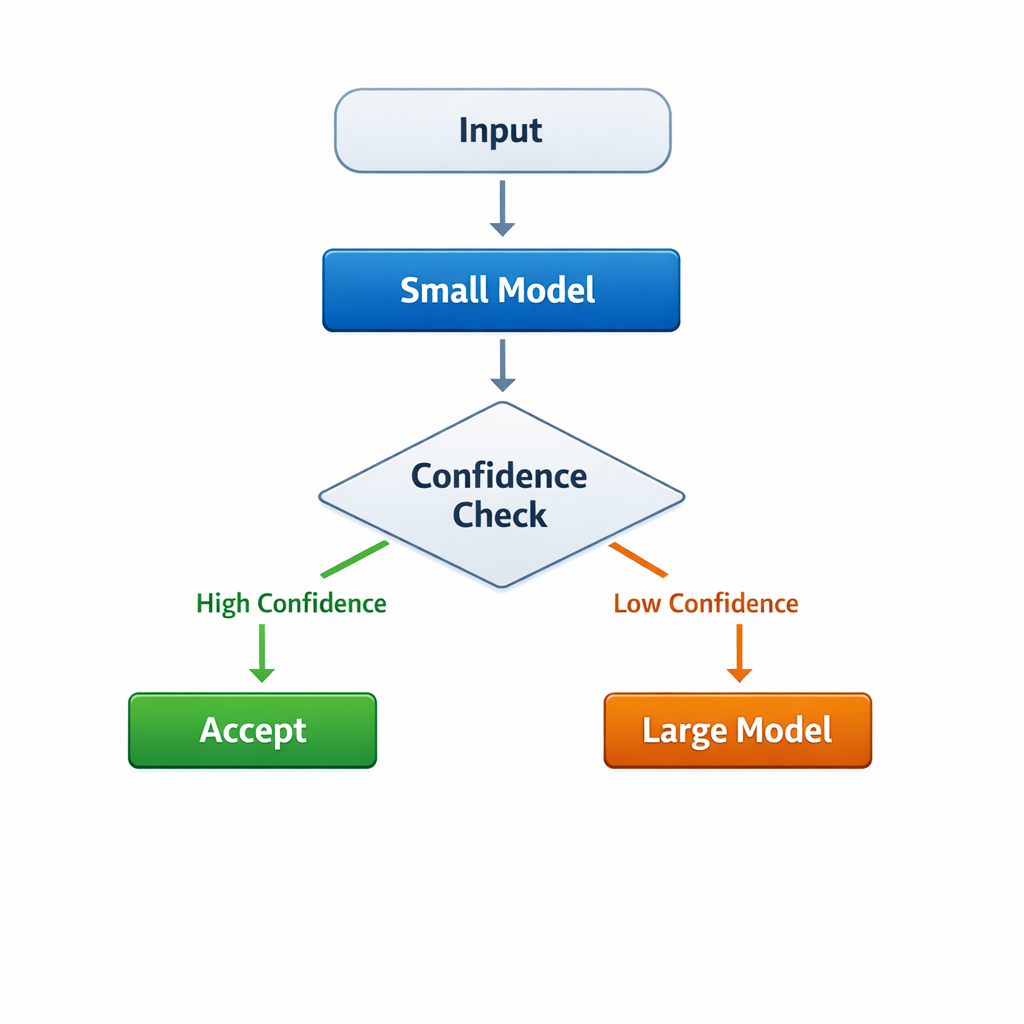

C. Confidence-Based Escalation

A smaller model produces an answer. A validator scores the output. If the score falls below a threshold, the request is rerouted to a stronger model.

This hybrid pattern balances quality and cost.
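
The escalation flow can be sketched as follows. The models and the validator are passed in as plain callables, and the 0.7 threshold is an arbitrary value chosen for the example.

```python
from typing import Callable


def answer_with_escalation(
    prompt: str,
    small: Callable[[str], str],
    large: Callable[[str], str],
    validate: Callable[[str, str], float],
    threshold: float = 0.7,  # illustrative value, tune per workload
) -> tuple[str, str]:
    """Return (answer, model_used).

    The small model answers first. The validator scores that draft in
    [0, 1]; only a score below the threshold triggers the large model.
    """
    draft = small(prompt)
    if validate(prompt, draft) >= threshold:
        return draft, "small"
    return large(prompt), "large"
```

Note that an escalated request pays for both model calls plus validation, so the pattern only saves money when most requests pass on the first attempt.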

D. Tool First, Model Second

In some systems, you route first to:

- Search systems

- Databases

- Rule engines

Only if structured systems fail do you escalate to a large model.

This prevents unnecessary token usage and improves determinism.
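
A minimal sketch of the tool-first pattern is shown below. The `FAQ` table is a hypothetical stand-in for a search index, database, or rule engine, and a tool signals "no answer" by returning `None`.

```python
from typing import Callable, Optional, Sequence

# Hypothetical structured source standing in for a database or rule engine.
FAQ = {"store hours": "Open 9:00-17:00, Monday to Friday."}


def lookup_faq(query: str) -> Optional[str]:
    """Deterministic lookup; returns None when there is no exact match."""
    return FAQ.get(query.strip().lower())


def answer(
    query: str,
    tools: Sequence[Callable[[str], Optional[str]]],
    model: Callable[[str], str],
) -> tuple[str, str]:
    """Try each structured tool in order; call the model only if all miss."""
    for tool in tools:
        result = tool(query)
        if result is not None:
            return result, "tool"
    return model(query), "model"
```

Because the tools are tried in order, the cheapest and most deterministic source should come first in the list.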

4. Multi-Model Systems as a Reliability Pattern

Routing also improves reliability.

If one provider degrades, traffic can shift. If latency spikes, fallback models can engage. If safety filters trigger, specialized safety models can intervene.

This resembles microservices thinking in distributed systems:

- Decouple responsibilities

- Add fallback paths

- Avoid single points of failure

The orchestration layer becomes the new control plane.
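
A fallback path can be as simple as an ordered list of providers. The sketch below assumes each provider is a callable that raises an exception on failure; the provider names and the `AllProvidersFailed` error type are made up for the example.

```python
from typing import Callable, Sequence


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has failed."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try providers in order and return (response, provider_name).

    Any exception from a provider (timeout, outage, rate limit) moves
    the request to the next one in the list.
    """
    errors = []
    for name, call in providers:
        try:
            return call(prompt), name
        except Exception as exc:
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))
```

A production version would likely add per-provider timeouts and a circuit breaker so that a degraded provider is skipped without waiting for each call to fail.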

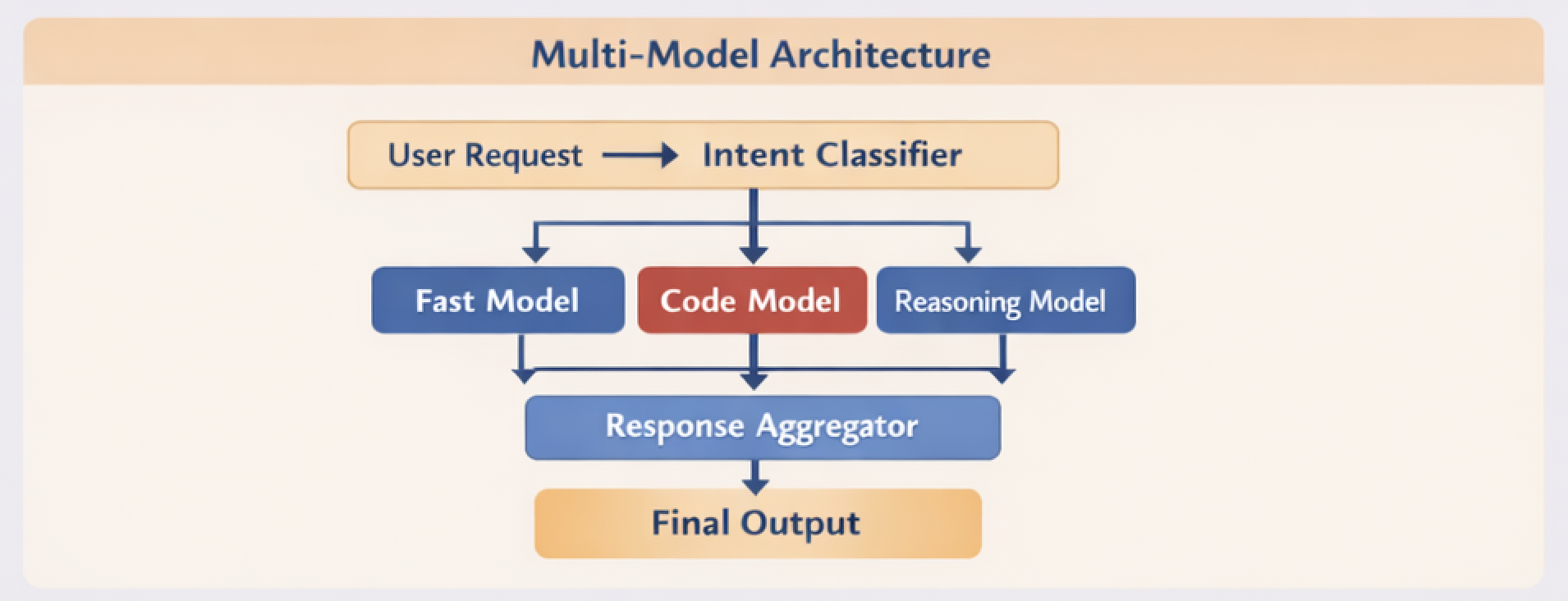

5. Architecture Example

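Here is a simplified multi-model architecture: a router dispatches each request to one or more model branches, and an aggregation step assembles the final response.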

Notice that:

- Models are modular

- Routing is explicit

- Aggregation is controlled

- Observability can be attached to each branch

This design allows iterative improvement without redesigning the whole system.
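
To make these properties concrete, here is one possible shape for such a system in code. The `Pipeline` class and its field names are assumptions made for this sketch; the point is only that models, routing, aggregation, and observability are separate, swappable pieces.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Model = Callable[[str], str]


@dataclass
class Pipeline:
    models: dict[str, Model]                     # modular: swap entries freely
    route: Callable[[str], list[str]]            # explicit: names of branches to run
    aggregate: Callable[[dict[str, str]], str]   # controlled: one merge point
    on_branch: Optional[Callable[[str, str], None]] = None  # observability hook

    def run(self, prompt: str) -> str:
        outputs: dict[str, str] = {}
        for name in self.route(prompt):
            outputs[name] = self.models[name](prompt)
            if self.on_branch is not None:
                # Called once per branch with (branch_name, branch_output).
                self.on_branch(name, outputs[name])
        return self.aggregate(outputs)
```

Replacing a model, changing the routing rule, or attaching a new metrics hook each touches one field, which is what allows the iterative improvement described above.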

6. Observability Becomes Critical

When multiple models are involved, debugging becomes harder.

You must track:

- Which model handled the request

- Token usage per branch

- Latency per model

- Escalation rates

- Failure patterns

Without telemetry, routing logic becomes invisible technical debt.

Multi-model systems require:

- Structured logging

- Branch-level metrics

- Cost dashboards

- Drift monitoring per model

Evaluation must operate at the system level, not just at the model level.
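
A minimal sketch of branch-level telemetry is shown below. The `RoutingTelemetry` class and its field names are hypothetical; a real system would ship these records to its existing logging and metrics stack rather than keep them in memory.

```python
import json
from collections import defaultdict
from typing import Optional


class RoutingTelemetry:
    """Collects one structured record per routed request."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def record(self, model: str, tokens: int, latency_ms: float,
               escalated: bool = False, error: Optional[str] = None) -> str:
        entry = {"model": model, "tokens": tokens, "latency_ms": latency_ms,
                 "escalated": escalated, "error": error}
        self.records.append(entry)
        return json.dumps(entry)  # one JSON line, ready for a log sink

    def summary(self) -> dict:
        """Aggregate requests, tokens, latency, and failures per model."""
        per_model = defaultdict(lambda: {"requests": 0, "tokens": 0,
                                         "latency_ms_total": 0.0, "failures": 0})
        for r in self.records:
            m = per_model[r["model"]]
            m["requests"] += 1
            m["tokens"] += r["tokens"]
            m["latency_ms_total"] += r["latency_ms"]
            m["failures"] += int(r["error"] is not None)
        escalated = sum(1 for r in self.records if r["escalated"])
        rate = escalated / len(self.records) if self.records else 0.0
        return {"per_model": dict(per_model), "escalation_rate": rate}
```

The escalation rate is a system-level metric: it cannot be derived from any single model's logs, only from records that span the routing decision.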

7. Governance and Safety Considerations

Routing decisions are policy decisions.

For example:

- High-risk queries can be routed to stricter safety models

- Regulated workflows can be restricted to approved models

- Sensitive data can be limited to on-premises models

Routing becomes part of compliance architecture.
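
Such policies can be written as filters over a model catalog, as in the sketch below. The `ModelInfo` attributes and the catalog entries are invented for illustration; real compliance attributes would come from an organization's own approval process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelInfo:
    name: str
    on_premises: bool
    approved_for_regulated: bool
    strict_safety: bool


# Hypothetical catalog; names and flags are placeholders.
CATALOG = [
    ModelInfo("hosted-general", False, False, False),
    ModelInfo("hosted-approved", False, True, True),
    ModelInfo("onprem-approved", True, True, False),
]


def allowed_models(catalog: list[ModelInfo], *, sensitive_data: bool,
                   regulated: bool, high_risk: bool) -> list[str]:
    """Return names of models that satisfy every policy the request triggers."""
    return [
        m.name for m in catalog
        if (not sensitive_data or m.on_premises)
        and (not regulated or m.approved_for_regulated)
        and (not high_risk or m.strict_safety)
    ]
```

An empty result is meaningful: it says no model in the catalog is permitted for that request, and the system should refuse or hand off rather than quietly relax a constraint.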

This aligns closely with responsible system design principles such as those discussed in Building Responsible AI Systems, where governance, ownership, and monitoring are embedded across the lifecycle.

In multi-model systems, responsibility does not disappear. It multiplies.

8. The Emerging Pattern

We are moving from:

Model-centric thinking

to

System-centric thinking

Instead of asking:

Which is the best model?

We should ask:

What is the best system of models for this workflow?

That mindset shift unlocks:

- Cost efficiency

- Better latency control

- Domain specialization

- Improved reliability

- Safer deployments

9. Looking Ahead

As the ecosystem matures, I expect routing layers to become first-class infrastructure components, much like API gateways in cloud architectures.

Future systems will likely include:

- Adaptive routing based on real-time performance

- Continuous evaluation loops

- Automatic traffic shifting based on quality metrics

- Model A/B testing within production flows
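
As one small example of what in-production A/B testing might look like, the sketch below splits traffic deterministically by hashing a request identifier. The function name, the model names, and the choice of SHA-256 are assumptions for illustration only.

```python
import hashlib


def assign_variant(request_id: str, candidate_share: float) -> str:
    """Deterministically assign a request to the baseline or candidate model.

    The first 8 bytes of the SHA-256 digest are read as an integer in
    [0, 2**64). Requests whose value falls below candidate_share * 2**64
    go to the candidate, so the same ID always gets the same variant.
    """
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    value = int.from_bytes(digest[:8], "big")
    if value < candidate_share * 2**64:
        return "candidate-model"
    return "baseline-model"
```

Hashing a stable identifier instead of drawing a random number keeps assignments reproducible, which makes it easier to compare quality metrics between the two variants afterwards.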

Multi-model systems are not about complexity for its own sake.

They are about acknowledging a simple truth:

No single model is optimal for every task.

Designing the routing layer thoughtfully may become one of the most important engineering decisions in AI system architecture.

And in many ways, it marks the transition from experimenting with models to engineering AI platforms.