The Latency Crisis: Real-Time AI vs GPU Economics

Speed used to be a competitive advantage. Now it is a contractual obligation.

In ongoing conversations with peers working on large-scale deployments, one theme keeps coming up: latency is becoming the dominant constraint in enterprise AI systems.

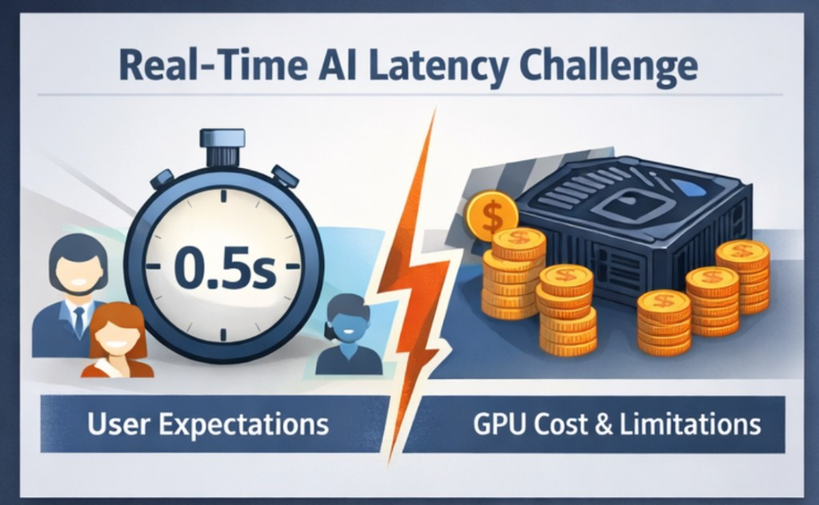

The early phase of enterprise AI adoption focused on model quality and capability. The next phase centered on integration, governance, and safety. What we are navigating now feels different. Real-time user expectations are colliding directly with GPU economics.

Performance engineering is back at the center of architecture discussions.

Let us unpack why this tension is intensifying and how we can respond pragmatically.

Why Latency Budgets Are Shrinking

Enterprise AI is moving closer to the user interface.

We are embedding models inside:

- Customer support copilots within CRM systems

- Fraud detection systems with human review loops

- Developer assistants inside IDE workflows

- Voice agents operating in live sessions

In these contexts, five seconds is not acceptable. Even one second can feel slow. Sub-second responsiveness is becoming the expectation.

At the same time:

- Context windows are expanding

- Prompts are richer and longer

- Retrieval pipelines introduce additional hops

- Multi-step reasoning increases the number of generated tokens

We are asking more from models while compressing response time. That compression is where the stress begins.

The Physics of Transformer Inference

It helps to revisit first principles.

Transformer inference cost is primarily influenced by:

- Attention complexity

- Model parameter count

- Context length

- Hardware bandwidth limits

Attention Complexity vs Context Length

Self-attention compute scales roughly as O(n²) in sequence length n.

If context doubles, attention computation during prompt processing roughly quadruples. Larger prompts also mean larger key-value tensors, more memory reads, and heavier intermediate activations.
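To make the scaling concrete, here is a minimal back-of-the-envelope sketch. It counts only the two attention matmuls (scores and value mixing) and ignores projections, MLP layers, causal masking, and grouped-query attention. The function names and the model shape are hypothetical, chosen only for illustration.

```python
def attention_prefill_flops(seq_len: int, d_model: int, n_layers: int) -> int:
    """Approximate FLOPs for the attention matmuls during prompt processing.

    Q @ K^T and scores @ V each cost about 2 * n^2 * d FLOPs per layer,
    so the total is about 4 * n^2 * d per layer.
    """
    return n_layers * 4 * seq_len * seq_len * d_model


def kv_cache_bytes(seq_len: int, d_model: int, n_layers: int,
                   bytes_per_value: int = 2) -> int:
    """Bytes needed to hold keys and values for one sequence.

    Assumes full multi-head attention (no key/value sharing across heads)
    and 2 bytes per value (16-bit precision) by default.
    """
    return 2 * n_layers * seq_len * d_model * bytes_per_value


if __name__ == "__main__":
    d_model, n_layers = 4096, 32  # hypothetical model shape
    for n in (4_096, 8_192, 16_384):
        flops = attention_prefill_flops(n, d_model, n_layers)
        cache_gib = kv_cache_bytes(n, d_model, n_layers) / 2**30
        print(f"n={n:>6}  attention FLOPs={flops:.2e}  KV cache={cache_gib:.1f} GiB")
```

Under these assumptions, doubling the context quadruples the attention FLOPs while the KV cache grows linearly. Both costs matter: the first hits time to first token, the second hits memory capacity and bandwidth.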

Long context feels architecturally elegant. It reduces retrieval complexity and simplifies orchestration. But it introduces a quadratic cost component that cannot be ignored.

The trade-off is structural, not accidental.

GPU Memory Bandwidth Constraints

In many real-world inference systems, performance is memory-bound rather than compute-bound.

GPUs offer extraordinary FLOPs, but memory bandwidth becomes the bottleneck when:

- KV caches grow large

- Batch sizes increase, so more KV cache is read on every decode step

- Multiple tenants share accelerators

When bandwidth saturates, latency variance increases. Median latency may remain acceptable, yet P95 and P99 deteriorate rapidly under load.

This is where many teams encounter instability at scale. The system appears healthy until traffic spikes, then tail latency expands dramatically.
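A rough way to see why bandwidth dominates is to estimate a floor on decode step time from bytes moved alone. The sketch below assumes every decode step reads all model weights once (shared across the batch) and each sequence's KV cache once, and that nothing else costs time. The function name and the numbers in the example are illustrative assumptions, not measurements.

```python
def decode_step_floor_ms(param_count: float, bytes_per_param: float,
                         kv_bytes_per_seq: float, batch_size: int,
                         bandwidth_gb_s: float) -> float:
    """Lower bound on one decode step if memory traffic were the only cost.

    Weights are read once per step regardless of batch size; KV cache
    traffic scales with the number of sequences in the batch.
    """
    bytes_moved = param_count * bytes_per_param + batch_size * kv_bytes_per_seq
    seconds = bytes_moved / (bandwidth_gb_s * 1e9)
    return seconds * 1e3


if __name__ == "__main__":
    # Hypothetical: 7B parameters at 2 bytes each, 2 GB of KV cache per
    # sequence, and 2000 GB/s of memory bandwidth.
    for batch in (1, 8, 32):
        floor = decode_step_floor_ms(7e9, 2, 2e9, batch, 2000)
        print(f"batch={batch:>2}  step floor ~ {floor:.1f} ms")
```

With long contexts the KV term quickly outweighs the weight term, which is one reason per-token latency degrades as batches of long sequences share an accelerator.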

Batch Inference Trade-Offs

Batching improves throughput and cost efficiency.

Processing more requests per forward pass increases utilization and reduces cost per token.

However:

- Larger batches increase queuing delay

- Tail latency grows under uneven traffic

- Deterministic response timing becomes harder

Throughput optimization and low latency are competing objectives.

Increasing batch size pushes GPU efficiency up. It also introduces waiting time for individual requests. For real time applications, this tension becomes highly visible.
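The waiting cost can be sketched with a simple model. Assume a server that waits until a batch of fixed size is full, with requests arriving at a steady average rate. The first request in a batch waits for all the others; the last waits for none. Real serving stacks usually add a timeout or use continuous batching, so treat this as an illustration of the trend rather than a prediction. Function names are hypothetical.

```python
def mean_batch_fill_wait_s(batch_size: int, arrival_rate_rps: float) -> float:
    """Average time a request waits for its batch to fill.

    The i-th request of a batch waits for (batch_size - i) more arrivals,
    each taking 1 / arrival_rate_rps on average, which averages out to
    (batch_size - 1) / (2 * arrival_rate_rps).
    """
    return (batch_size - 1) / (2.0 * arrival_rate_rps)


def first_request_fill_wait_s(batch_size: int, arrival_rate_rps: float) -> float:
    """Expected wait for the unluckiest request: the first one in the batch."""
    return (batch_size - 1) / arrival_rate_rps


if __name__ == "__main__":
    rate = 50.0  # hypothetical requests per second
    for b in (1, 4, 16, 64):
        mean_ms = mean_batch_fill_wait_s(b, rate) * 1e3
        worst_ms = first_request_fill_wait_s(b, rate) * 1e3
        print(f"batch={b:>2}  mean wait={mean_ms:6.0f} ms  first-in wait={worst_ms:6.0f} ms")
```

At 50 requests per second, a batch of 16 adds about 150 ms of average waiting before any computation starts, which is already a large share of a sub-second budget.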

What works for document summarization jobs may not work for conversational agents.

Caching as a First-Class Strategy

Several teams I have spoken with are re-evaluating caching as a core design primitive rather than an afterthought.

KV Cache Reuse

For conversational systems, persisting key-value states across turns reduces recomputation significantly.

The benefit is faster token generation. The cost is increased memory pressure.

Under multi-tenant workloads, memory fragmentation and eviction policies become critical.
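One way to picture the eviction problem is a byte-budgeted store that keeps per-session KV state and drops the least recently used session when memory runs out. This is a minimal sketch with a hypothetical `SessionKVStore` class. Production inference servers manage KV memory in GPU blocks or pages, which this does not model.

```python
from collections import OrderedDict
from typing import Any, Optional, Tuple


class SessionKVStore:
    """Byte-budgeted LRU store for per-session KV state (illustrative only)."""

    def __init__(self, budget_bytes: int) -> None:
        self.budget_bytes = budget_bytes
        self.used_bytes = 0
        self._entries: "OrderedDict[str, Tuple[Any, int]]" = OrderedDict()

    def get(self, session_id: str) -> Optional[Any]:
        entry = self._entries.get(session_id)
        if entry is None:
            return None  # miss: the caller has to recompute the prefix
        self._entries.move_to_end(session_id)  # mark as most recently used
        return entry[0]

    def put(self, session_id: str, kv_state: Any, size_bytes: int) -> None:
        if session_id in self._entries:
            self.used_bytes -= self._entries.pop(session_id)[1]
        self._entries[session_id] = (kv_state, size_bytes)
        self.used_bytes += size_bytes
        # Evict least recently used sessions until the budget is met.
        # The newest entry is always kept, even if it alone exceeds the budget.
        while self.used_bytes > self.budget_bytes and len(self._entries) > 1:
            _, (_, evicted_size) = self._entries.popitem(last=False)
            self.used_bytes -= evicted_size
```

The choice of eviction policy is a product decision as much as a systems one: an evicted session pays the full recomputation cost on its next turn.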

Prompt Caching

When large portions of system prompts or retrieved context remain constant, caching intermediate representations reduces startup overhead.

This is particularly useful for enterprise copilots that operate over standardized policy documents.

Response Caching

For high-frequency, semi-deterministic queries, application-layer response caching can remove inference from the critical path entirely.

The trade-off is freshness and consistency.
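The freshness trade-off shows up directly in code as a time-to-live. Below is a minimal sketch of an application-layer response cache. The `TTLResponseCache` class, its normalization rule (lowercasing and collapsing whitespace), and the injectable clock are all assumptions made for illustration.

```python
import hashlib
import time
from typing import Callable, Dict, Optional, Tuple


class TTLResponseCache:
    """Response cache keyed by normalized prompt text, with expiry."""

    def __init__(self, ttl_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl_s = ttl_s
        self._clock = clock
        self._store: Dict[str, Tuple[float, str]] = {}

    @staticmethod
    def _key(prompt: str) -> str:
        # Lowercase and collapse whitespace so trivial variations share a key.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get(self, prompt: str) -> Optional[str]:
        key = self._key(prompt)
        hit = self._store.get(key)
        if hit is None:
            return None
        stored_at, response = hit
        if self._clock() - stored_at > self.ttl_s:
            del self._store[key]  # stale: force a fresh inference
            return None
        return response

    def put(self, prompt: str, response: str) -> None:
        self._store[self._key(prompt)] = (self._clock(), response)
```

A short TTL favors freshness; a long one favors hit rate. Which side to favor depends on how costly a stale answer is for the product in question.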

Caching is not only a performance tool. It shapes product guarantees.

Throughput vs Determinism

Another pattern emerging in discussions is the tension between utilization and predictability.

Enterprises want:

- Stable latency

- Predictable cost per request

- Consistent user experience

Yet high GPU utilization introduces nonlinear effects. As utilization approaches saturation, small increases in traffic lead to queue buildup and rapid latency escalation.

This is classic queuing behavior applied to transformer inference. In simple queuing models, time in the system grows in proportion to 1 / (1 - utilization), so the last few points of utilization are the most expensive in latency terms.

Running GPUs at near maximum capacity optimizes cost efficiency on paper. It also increases the probability of unpredictable spikes.
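The nonlinearity is easy to see in the simplest textbook model, a single-server queue with random arrivals and exponentially distributed service times (M/M/1). GPU serving with batching is more complicated than this, so the sketch below is an illustration of the shape of the curve, not a sizing tool. Function names are hypothetical.

```python
import math


def mm1_mean_latency_ms(service_time_ms: float, utilization: float) -> float:
    """Mean time in system for an M/M/1 queue: service_time / (1 - utilization)."""
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1)")
    return service_time_ms / (1.0 - utilization)


def mm1_latency_quantile_ms(service_time_ms: float, utilization: float,
                            quantile: float) -> float:
    """Latency quantile for a first-come-first-served M/M/1 queue.

    Time in system is exponentially distributed in this model, so the
    quantile is -ln(1 - quantile) times the mean.
    """
    mean = mm1_mean_latency_ms(service_time_ms, utilization)
    return -math.log(1.0 - quantile) * mean


if __name__ == "__main__":
    for rho in (0.5, 0.7, 0.9, 0.95):
        mean = mm1_mean_latency_ms(100.0, rho)
        p99 = mm1_latency_quantile_ms(100.0, rho, 0.99)
        print(f"utilization={rho:.2f}  mean={mean:7.0f} ms  P99={p99:7.0f} ms")
```

In this model, moving from 50 to 90 percent utilization multiplies mean latency by five, and the P99 sits at roughly 4.6 times the mean throughout.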

Enterprise Mitigation Strategies

From collective discussions across teams, mitigation typically includes a combination of the following.

Right-Sizing the Model

Not every workload requires frontier-scale models.

Mid-sized or distilled variants often meet quality thresholds while improving latency and cost stability.

Context Discipline

Instead of defaulting to maximum context:

- Limit top-K retrieval strictly

- Summarize conversation history

- Use sliding windows intentionally

Long context should be a deliberate choice, not a default setting.
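As one example of context discipline, a sliding window over conversation history can be sketched in a few lines. The `trim_history` function is hypothetical, and the default whitespace word count is only a stand-in for the model's real tokenizer, which should be passed in as `count_tokens`.

```python
from typing import Callable, List


def trim_history(turns: List[str], max_tokens: int,
                 count_tokens: Callable[[str], int] = lambda t: len(t.split())
                 ) -> List[str]:
    """Keep the most recent turns whose combined size fits within max_tokens.

    Walks backward from the newest turn and stops at the first turn that
    would exceed the budget, so the kept turns are always contiguous.
    """
    kept: List[str] = []
    used = 0
    for turn in reversed(turns):
        cost = count_tokens(turn)
        if used + cost > max_tokens:
            break
        kept.append(turn)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

Older turns that fall outside the window can be replaced by a running summary, which trades a small amount of fidelity for a bounded prompt size.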

Quantization and Precision Tuning

Lower-precision inference reduces memory bandwidth demand and increases effective throughput.

Careful evaluation is required to monitor quality regression, especially for reasoning-heavy tasks.
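To show where the quality risk comes from, here is a minimal sketch of symmetric 8-bit quantization on a list of floats, with a round-trip error check. It is a toy: real quantization schemes work per channel or per group on tensors, and real evaluation should use task-level metrics rather than raw numeric error. All function names are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integer codes."""
    return [q * scale for q in quantized]


def max_round_trip_error(values: List[float]) -> float:
    """Largest absolute difference after quantizing and dequantizing."""
    quantized, scale = quantize_int8(values)
    restored = dequantize_int8(quantized, scale)
    return max((abs(a - b) for a, b in zip(values, restored)), default=0.0)
```

Each value is stored in one byte instead of two or four, and the round-trip error is bounded by half the scale. A single large outlier inflates the scale for every other value, which is one reason quantization quality varies by model and by layer.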

Workload Isolation

Latency-sensitive traffic should be isolated from batch analytical processing.

Mixing both in the same GPU pool amplifies tail risk.

Deep Observability

Measure granular metrics:

- Time to first token

- Tokens generated per second

- GPU memory utilization

- Cache hit ratios

Without visibility at the token and memory level, tuning becomes speculative.
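The first two metrics can be captured by wrapping whatever token stream the serving layer exposes. The sketch below assumes the stream is a plain Python iterable of tokens. The `measure_stream` function and `StreamMetrics` type are hypothetical, and the clock is injectable so the logic can be tested without real delays.

```python
import time
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass
class StreamMetrics:
    time_to_first_token_s: float
    tokens_per_second: float  # generation rate after the first token
    token_count: int


def measure_stream(tokens: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter
                   ) -> StreamMetrics:
    """Consume a token stream and record per-request latency metrics."""
    start = clock()
    first_token_at: Optional[float] = None
    last_token_at: Optional[float] = None
    count = 0
    for _ in tokens:
        last_token_at = clock()
        if first_token_at is None:
            first_token_at = last_token_at
        count += 1
    if first_token_at is None or last_token_at is None:
        return StreamMetrics(float("nan"), 0.0, 0)  # empty stream
    decode_s = last_token_at - first_token_at
    rate = (count - 1) / decode_s if decode_s > 0 else 0.0
    return StreamMetrics(first_token_at - start, rate, count)
```

Separating time to first token from the steady generation rate matters because they respond to different levers: the first is dominated by queuing and prompt processing, the second by decode-time memory traffic.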

Capacity Planning Models

AI capacity planning needs to move beyond average request rates.

A practical starting model: multiply requests per second by the average input plus output tokens per request and by a model size factor, then divide by the effective tokens per second per GPU. Then apply a peak multiplier, the desired headroom (often 30 to 40 percent), and failure isolation buffers.
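The starting model above can be written down as a small function. Two choices here are my assumptions rather than part of the formula: headroom is interpreted as a utilization ceiling (30 percent headroom means peak load should use at most 70 percent of capacity), and the failure isolation buffer is expressed as a whole number of extra GPUs. All parameter names and example values are hypothetical.

```python
import math


def gpus_required(requests_per_s: float,
                  avg_input_tokens: float,
                  avg_output_tokens: float,
                  tokens_per_s_per_gpu: float,
                  model_size_factor: float = 1.0,
                  peak_multiplier: float = 1.0,
                  headroom: float = 0.3,
                  failure_buffer_gpus: int = 0) -> int:
    """Estimate GPU count from token demand, peak load, and headroom."""
    if not 0.0 <= headroom < 1.0:
        raise ValueError("headroom must be in [0, 1)")
    tokens_per_request = avg_input_tokens + avg_output_tokens
    token_demand = requests_per_s * tokens_per_request * model_size_factor
    base_gpus = token_demand / tokens_per_s_per_gpu
    # Scale for peak traffic, then leave the requested share of capacity idle.
    sized = base_gpus * peak_multiplier / (1.0 - headroom)
    return math.ceil(sized) + failure_buffer_gpus


if __name__ == "__main__":
    n = gpus_required(requests_per_s=20, avg_input_tokens=1500,
                      avg_output_tokens=300, tokens_per_s_per_gpu=3000,
                      peak_multiplier=2.0, headroom=0.3,
                      failure_buffer_gpus=2)
    print(n)
```

This treats input and output tokens as equally expensive, as the starting model does. In practice prompt processing and token generation run at very different rates, so a measured, workload-specific value for effective tokens per second per GPU is what makes the estimate useful.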

Planning against average latency is insufficient. P95 and P99 targets should drive provisioning.

The difference between a responsive system and a frustrating one often lives in the tail.

Cost-to-Latency Optimization Framework

When evaluating architecture decisions, I find it useful to think in terms of a frontier rather than a single optimum.

Step 1: Define the latency ceiling: What is the maximum acceptable response time for the product experience?

Step 2: Define the cost boundary: What cost per thousand requests aligns with the business model?

Step 3: Explore the trade-off surface: Adjust model size, batch size, context length, quantization level, and GPU class. Plot cost against latency. There will not be one perfect solution. There will be a curve representing viable options.

Step 4: Align with product intent: Internal copilots may tolerate moderate latency. Live voice systems will not. Architecture must reflect use case sensitivity.
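The four steps can be sketched as a filter over measured configurations: keep only the options that no other option beats on both cost and latency, then apply the latency ceiling and cost boundary. The `Config` type, field names, and sample numbers below are hypothetical placeholders for real benchmark results.

```python
from typing import List, NamedTuple


class Config(NamedTuple):
    name: str
    cost_per_1k_requests: float
    p95_latency_ms: float


def pareto_frontier(configs: List[Config]) -> List[Config]:
    """Return configs that are not dominated on both cost and latency."""
    frontier: List[Config] = []
    best_latency = float("inf")
    ordered = sorted(configs,
                     key=lambda c: (c.cost_per_1k_requests, c.p95_latency_ms))
    for cfg in ordered:
        # A pricier config earns its place only by being strictly faster
        # than every cheaper config seen so far.
        if cfg.p95_latency_ms < best_latency:
            frontier.append(cfg)
            best_latency = cfg.p95_latency_ms
    return frontier


def viable_options(configs: List[Config], latency_ceiling_ms: float,
                   cost_boundary: float) -> List[Config]:
    """Frontier configs that satisfy both the latency and cost limits."""
    return [c for c in pareto_frontier(configs)
            if c.p95_latency_ms <= latency_ceiling_ms
            and c.cost_per_1k_requests <= cost_boundary]


if __name__ == "__main__":
    measured = [
        Config("small-int8", 2.0, 900.0),
        Config("mid-fp16", 4.0, 400.0),
        Config("mid-long-context", 5.0, 600.0),
        Config("large-dedicated", 9.0, 150.0),
    ]
    for cfg in viable_options(measured, latency_ceiling_ms=500.0, cost_boundary=8.0):
        print(cfg)
```

If the viable list comes back empty, that is useful information too: either the latency ceiling or the cost boundary has to move, and that is a product conversation rather than an engineering one.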

A Forward-Looking Architectural Lens

The latency crisis is not temporary. It reflects a deeper reality.

Transformer models are governed by attention complexity. GPUs are governed by memory bandwidth. Queues are governed by mathematics.

Real-time AI requires discipline across all three.

Teams that treat performance engineering as a core architectural competency will build systems that are both responsive and economically sustainable.

Those who ignore the physics may find that model intelligence scales faster than system reliability.

The next phase of enterprise AI will not be defined solely by smarter models. It will be defined by how intelligently we deploy them under real-world constraints.