Designing AI Systems for CFO Approval

Within the last year, AI conversations inside organizations have shifted from curiosity to commitment. Pilots are running. Prototypes are live. Product leaders are excited.

Then comes the real gate:

CFO approval.

AI systems that cannot clearly articulate financial value rarely move beyond experimentation. Technical strength alone does not secure funding. Architecture must translate into measurable economic impact, operational control, and predictable cloud expenditure.

If we want AI to scale responsibly inside enterprises, we must design systems that are defensible not only technically but also financially.

Let us explore how to do that.

1. Start with ROI Framing, Not Model Selection

Most AI initiatives begin with capability discussions:

- Which LLM

- Which vector database

- Which orchestration layer

CFOs start somewhere else:

- What measurable cost is reduced

- What measurable revenue is increased

- What risk exposure is lowered

Anchor AI proposals to one of three financial levers:

1. Efficiency Gains

Reduced support costs, analyst time, and manual processing hours.

2. Revenue Acceleration

Improved conversion rates, faster sales cycles, and measurable revenue lift from personalization.

3. Risk Reduction

Compliance automation, improved fraud detection, and fewer costly errors.

Architecture becomes credible when expressed in financial language rather than technical vocabulary.
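To make the three levers concrete, each one can be reduced to a monthly benefit estimate set against the AI spend it requires. The sketch below is a minimal illustration, not a finance-approved model: the `LeverEstimate` class, the `monthly_roi` and `payback_months` functions, and all figures are hypothetical, and it assumes benefits and costs are already expressed per month in the same currency.

```python
from dataclasses import dataclass


@dataclass
class LeverEstimate:
    """Hypothetical monthly estimate for one financial lever."""
    name: str
    monthly_benefit: float  # cost reduced, revenue gained, or expected loss avoided
    monthly_ai_cost: float  # model, retrieval, and cloud infrastructure spend


def monthly_roi(estimate: LeverEstimate) -> float:
    """Net benefit divided by AI cost; 3.0 means a 300% return on monthly spend."""
    if estimate.monthly_ai_cost <= 0:
        raise ValueError("monthly_ai_cost must be positive")
    net = estimate.monthly_benefit - estimate.monthly_ai_cost
    return net / estimate.monthly_ai_cost


def payback_months(upfront_cost: float, estimate: LeverEstimate) -> float:
    """Months until cumulative net benefit covers a one-time build cost."""
    net = estimate.monthly_benefit - estimate.monthly_ai_cost
    if net <= 0:
        return float("inf")  # never pays back at current unit economics
    return upfront_cost / net


# Illustrative efficiency lever: 2,000 analyst hours saved at a $60 loaded rate.
analyst = LeverEstimate("analyst time saved", monthly_benefit=2_000 * 60, monthly_ai_cost=30_000)
print(monthly_roi(analyst))              # 3.0
print(payback_months(180_000, analyst))  # 2.0
```

For the risk lever, `monthly_benefit` would usually be an expected loss avoided (reduction in incident probability times impact), which is harder to pin down and deserves a range rather than a single number in the proposal.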

2. Track Cost per Query Relentlessly

AI systems introduce token-based pricing, GPU variability, and usage-driven cost curves. Unlike traditional fixed-capacity systems, their cost scales with interaction volume.

Every AI deployment should expose:

- Cost per query

- Cost per active user

- Cost per successful task completion

Practical internal formulas (all three share the same numerator, the total AI cost for the period):

$$
\text{Cost per Query} = \frac{\text{Total Model Cost} + \text{Retrieval Cost} + \text{Cloud Infrastructure Cost}}{\text{Total Number of Queries}}
$$

$$
\text{Cost per Active User} = \frac{\text{Total Model Cost} + \text{Retrieval Cost} + \text{Cloud Infrastructure Cost}}{\text{Number of Active Users}}
$$

$$
\text{Cost per Successful Task} = \frac{\text{Total Model Cost} + \text{Retrieval Cost} + \text{Cloud Infrastructure Cost}}{\text{Number of Successful Task Completions}}
$$

These metrics enable forecasting, pricing alignment, and scenario modeling for scale.

When finance teams see predictable unit economics, confidence increases.
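The unit-cost formulas above translate directly into code. This is a minimal sketch under stated assumptions: `PeriodCosts`, `unit_costs`, and `forecast_spend` are hypothetical names, the figures are illustrative, and the forecast assumes spend scales linearly with volume, which ignores volume discounts and caching.

```python
from dataclasses import dataclass


@dataclass
class PeriodCosts:
    """Total AI spend for one reporting period (for example, one month)."""
    model_cost: float
    retrieval_cost: float
    cloud_infra_cost: float

    @property
    def total(self) -> float:
        # Shared numerator of all three unit-cost formulas.
        return self.model_cost + self.retrieval_cost + self.cloud_infra_cost


def unit_costs(costs: PeriodCosts, queries: int, active_users: int,
               successful_tasks: int) -> dict[str, float]:
    """Cost per query, per active user, and per successful task completion."""
    if min(queries, active_users, successful_tasks) <= 0:
        raise ValueError("all denominators must be positive")
    return {
        "cost_per_query": costs.total / queries,
        "cost_per_active_user": costs.total / active_users,
        "cost_per_successful_task": costs.total / successful_tasks,
    }


def forecast_spend(cost_per_query: float, projected_queries: int) -> float:
    """Naive forecast assuming cost scales linearly with query volume."""
    return cost_per_query * projected_queries


month = PeriodCosts(model_cost=6_000, retrieval_cost=1_500, cloud_infra_cost=2_500)
metrics = unit_costs(month, queries=200_000, active_users=2_500, successful_tasks=160_000)
print(metrics)
# {'cost_per_query': 0.05, 'cost_per_active_user': 4.0, 'cost_per_successful_task': 0.0625}
print(forecast_spend(metrics["cost_per_query"], 1_000_000))  # 50000.0
```

The gap between cost per query and cost per successful task is informative in itself: failed or abandoned interactions still consume tokens, so a widening gap points to waste rather than growth.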

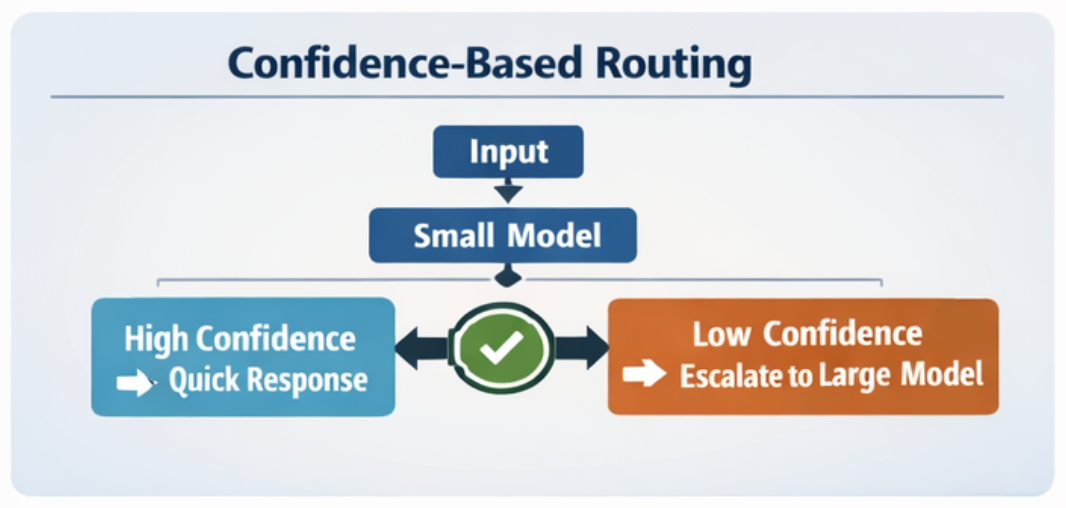

3. Confidence Routing Reduces Waste

One of the most financially responsible architectural patterns is confidence-based routing.

Many routine tasks can be resolved by smaller, cheaper models deployed through lightweight cloud endpoints. Only low-confidence cases escalate to more expensive models or higher-tier APIs.

This reduces:

- Token spend

- GPU consumption

- API overuse

- Latency

For a CFO, this demonstrates that cost control is engineered into the system rather than managed reactively.
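A confidence router can be sketched in a few lines. This assumes each model call returns a confidence score in [0, 1] and the cost attributed to that call; how the score is produced (a self-reported score, a separate classifier, or something else) is outside this sketch. `ModelResult`, `route`, `expected_cost_per_query`, and the 0.8 default threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ModelResult:
    answer: str
    confidence: float  # assumed to be a calibrated score in [0, 1]
    cost: float        # spend attributed to this single call


Model = Callable[[str], ModelResult]


def route(query: str, small_model: Model, large_model: Model,
          threshold: float = 0.8) -> tuple[ModelResult, float]:
    """Try the cheap model first and escalate only low-confidence answers.

    Returns the accepted result and the total cost incurred for the query.
    """
    first = small_model(query)
    if first.confidence >= threshold:
        return first, first.cost
    # Low confidence: call the expensive model; the cheap call is still paid for.
    second = large_model(query)
    return second, first.cost + second.cost


def expected_cost_per_query(small_cost: float, large_cost: float,
                            escalation_rate: float) -> float:
    """Blended cost when a fraction of queries escalates to the large model."""
    return small_cost + escalation_rate * large_cost
```

With illustrative prices of 0.002 per small call and 0.03 per large call, and 20% of queries escalating, `expected_cost_per_query(0.002, 0.03, 0.2)` comes to about 0.008, roughly a quarter of sending everything to the large model. The threshold is the main tuning knob: raising it buys quality at the price of more escalations.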

4. Cloud Services Strategy and Cost Discipline

Most AI systems today run on cloud infrastructure, whether fully managed APIs or self-hosted models on GPU clusters.

Cloud adoption brings flexibility, but also financial exposure.

Design principles for CFO confidence:

1. Use Managed Services Where Differentiation Is Low

Managed vector databases, managed model endpoints, and serverless orchestration reduce operational overhead. They may appear more expensive per unit, but they often lower total cost of ownership when staffing and maintenance are included.

2. Monitor GPU Utilization Closely

Idle GPU instances can become silent cost drivers. Implement:

- Autoscaling

- Scheduled shutdown policies

- Utilization dashboards
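A scheduled shutdown policy and an idle check can be expressed as one decision function that a scheduler might run periodically. The sketch assumes per-instance GPU utilization samples (in percent) are already being collected; the thresholds, working hours, function names, and the rule that production capacity is left to the autoscaler are hypothetical choices, and the actual stop call to the cloud provider is deliberately omitted.

```python
from datetime import datetime, time


def is_idle(samples: list[float], idle_threshold: float = 5.0) -> bool:
    """True if every recent GPU utilization sample (percent) is below the threshold."""
    return bool(samples) and max(samples) < idle_threshold


def should_stop(samples: list[float], now: datetime, is_production: bool,
                work_start: time = time(8, 0), work_end: time = time(19, 0),
                idle_threshold: float = 5.0) -> bool:
    """Decide whether a GPU instance should be stopped under this example policy."""
    if is_production:
        return False  # production capacity is left to the autoscaler
    weekend = now.weekday() >= 5  # Saturday=5, Sunday=6
    outside_hours = weekend or not (work_start <= now.time() < work_end)
    return outside_hours or is_idle(samples, idle_threshold)


def unused_spend(hourly_rate: float, hours: float, avg_utilization_pct: float) -> float:
    """Dashboard metric: money paid for GPU capacity that sat unused."""
    return hourly_rate * hours * (1 - avg_utilization_pct / 100)


print(should_stop([1.2, 0.8, 2.0], datetime(2024, 1, 10, 12, 0), is_production=False))  # True
print(unused_spend(hourly_rate=4.0, hours=720, avg_utilization_pct=25.0))  # 2160.0
```

Surfacing `unused_spend` on the utilization dashboard turns an abstract utilization percentage into a currency figure, which is the form finance teams act on.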

3. Separate Experimentation from Production Budgets

Sandbox environments should have capped budgets and usage alerts. Production systems should have defined cost thresholds tied to revenue metrics.
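Capped sandbox budgets and revenue-linked production thresholds can share one check. In this sketch, which assumes month-to-date spend is already available from billing data, sandboxes are hard-capped while production overruns only raise alerts for human review; the `BudgetPolicy` class, the alert ratios, and the 10% cost-to-revenue ratio in the example are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Env(Enum):
    SANDBOX = "sandbox"
    PRODUCTION = "production"


@dataclass
class BudgetPolicy:
    env: Env
    monthly_limit: float  # hard cap (sandbox) or review threshold (production)
    alert_ratios: tuple[float, ...] = (0.5, 0.8, 1.0)


def production_limit(attributed_monthly_revenue: float, max_cost_share: float) -> float:
    """Tie the production threshold to revenue, e.g. AI cost <= 10% of revenue."""
    return attributed_monthly_revenue * max_cost_share


def check_spend(policy: BudgetPolicy, month_to_date: float) -> tuple[list[float], bool]:
    """Return the alert ratios crossed so far and whether new usage should be blocked."""
    used = month_to_date / policy.monthly_limit
    crossed = [r for r in policy.alert_ratios if used >= r]
    # Only sandboxes are hard-capped; production overruns alert but keep serving.
    blocked = policy.env is Env.SANDBOX and used >= 1.0
    return crossed, blocked


sandbox = BudgetPolicy(Env.SANDBOX, monthly_limit=1_000)
prod = BudgetPolicy(Env.PRODUCTION, monthly_limit=production_limit(200_000, 0.10))
print(check_spend(sandbox, 1_050))  # ([0.5, 0.8, 1.0], True)
print(check_spend(prod, 17_000))    # ([0.5, 0.8], False)
```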

4. Align Cloud Contracts with Usage Forecasts

Committed-use discounts or reserved capacity can significantly lower long-term cost once demand stabilizes.
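Whether a commitment pays off comes down to a break-even calculation. The sketch below assumes a simplified hour-based commitment: committed hours are billed at a discount whether used or not, and any overflow is billed at the on-demand rate. Real provider programs differ (some commit to spend rather than hours), so the function names, the rate, and the 30% discount are hypothetical.

```python
def on_demand_cost(hourly_rate: float, hours_used: float) -> float:
    return hourly_rate * hours_used


def committed_cost(hourly_rate: float, discount: float,
                   committed_hours: float, hours_used: float) -> float:
    """Committed hours are paid in full at the discounted rate; overflow is on demand."""
    committed = hourly_rate * (1 - discount) * committed_hours
    overflow = max(0.0, hours_used - committed_hours) * hourly_rate
    return committed + overflow


def break_even_utilization(discount: float) -> float:
    """Minimum share of committed hours that must be used for the commitment to pay off."""
    return 1 - discount


# Illustrative: 30% discount on 1,000 committed hours, 800 hours actually used.
print(break_even_utilization(0.30))                      # about 0.7
print(on_demand_cost(10.0, 800))                         # 8000.0
print(round(committed_cost(10.0, 0.30, 1_000, 800), 2))  # 7000.0
```

Here 80% utilization clears the 70% break-even, so the commitment is cheaper. If demand has not yet stabilized, the unused committed hours become the silent cost instead.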

Cloud flexibility is powerful. Financial governance must scale with it.

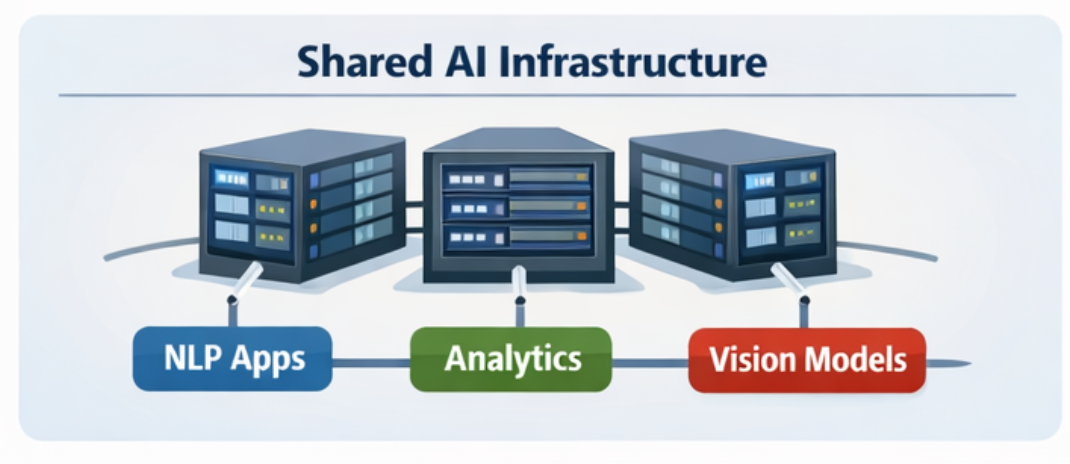

5. Infrastructure Amortization Across Cloud Workloads

AI infrastructure should not be rebuilt per product team.

A shared AI platform layer enables:

- Common model gateway

- Unified monitoring

- Shared embeddings pipeline

- Standard evaluation framework

Cloud infrastructure, especially GPU clusters or high-throughput inference endpoints, should serve multiple use cases.

Amortizing infrastructure across workloads reduces marginal cost per application and improves CFO confidence in capital efficiency.
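The amortization argument is simple arithmetic: a shared platform's fixed cost is divided across every workload that uses it, while dedicated stacks repeat that fixed cost for each team. The sketch assumes an equal split of platform cost (usage-proportional chargeback is a common alternative), and the function names and figures are hypothetical.

```python
def per_app_cost_shared(platform_fixed_cost: float,
                        app_variable_costs: list[float]) -> list[float]:
    """Each app pays an equal share of the shared platform plus its own usage."""
    if not app_variable_costs:
        raise ValueError("need at least one application")
    share = platform_fixed_cost / len(app_variable_costs)
    return [share + v for v in app_variable_costs]


def per_app_cost_dedicated(fixed_cost_per_stack: float,
                           app_variable_costs: list[float]) -> list[float]:
    """Each team builds and runs its own stack, so every app carries a full fixed cost."""
    return [fixed_cost_per_stack + v for v in app_variable_costs]


usage = [5_000.0, 5_000.0, 5_000.0, 5_000.0]   # monthly usage cost per application
print(per_app_cost_shared(60_000.0, usage))     # [20000.0, 20000.0, 20000.0, 20000.0]
print(per_app_cost_dedicated(25_000.0, usage))  # [30000.0, 30000.0, 30000.0, 30000.0]
```

Onboarding a fifth application would lower every team's platform share from 15,000 to 12,000 in this example, which is the falling marginal cost a CFO wants to see.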

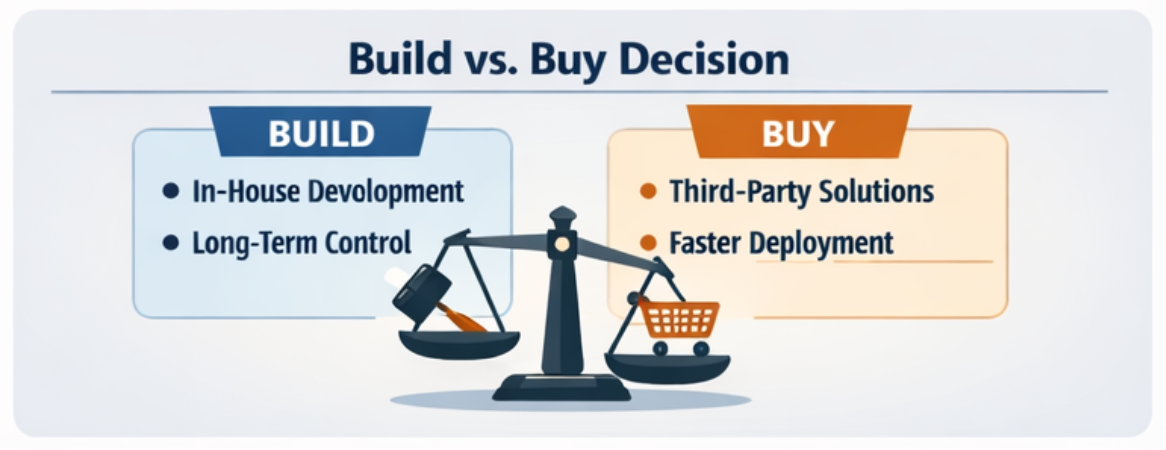

6. Build vs Buy Decisions in a Cloud Era

Cloud services complicate build vs buy decisions.

Options now include:

- Fully managed AI APIs

- Hosted open-source models

- Self-managed GPU clusters

- Hybrid architectures

Quantify:

- Engineering headcount cost

- Model retraining cycles

- DevOps overhead

- Vendor licensing fees

- Cloud compute volatility

Sometimes buying managed services reduces staffing cost and speeds deployment. Sometimes building offers a long-term margin advantage.

CFO approval accelerates when this decision is backed by transparent financial modeling rather than preference.
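One way to make that modeling transparent is to total each option's cost drivers over a planning horizon under several compute scenarios. The sketch below is illustrative only: `Option`, `total_cost`, `compare`, and every figure are hypothetical, and it models cloud compute volatility as a single multiplier applied to each option's compute line, which is a simplification since volatility rarely hits managed APIs and self-managed clusters equally.

```python
from dataclasses import dataclass


@dataclass
class Option:
    """Hypothetical monthly cost profile for one sourcing option."""
    name: str
    engineering_headcount: float  # loaded monthly cost of assigned engineers
    devops_overhead: float
    vendor_licensing: float
    compute: float                # expected monthly compute or API spend at base volume
    retraining_cost_per_cycle: float = 0.0
    retraining_cycles_per_year: int = 0


def total_cost(option: Option, months: int, compute_multiplier: float = 1.0) -> float:
    """Cost over the horizon; compute_multiplier models cloud compute volatility."""
    monthly = (option.engineering_headcount + option.devops_overhead
               + option.vendor_licensing + option.compute * compute_multiplier)
    retraining = (option.retraining_cost_per_cycle
                  * option.retraining_cycles_per_year * months / 12)
    return monthly * months + retraining


def compare(options: list[Option], months: int,
            scenarios: dict[str, float]) -> dict[str, dict[str, float]]:
    """Total cost of every option under every named compute scenario."""
    return {s: {o.name: total_cost(o, months, m) for o in options}
            for s, m in scenarios.items()}


options = [
    Option("managed API", engineering_headcount=15_000, devops_overhead=2_000,
           vendor_licensing=5_000, compute=20_000),
    Option("self-managed", engineering_headcount=45_000, devops_overhead=10_000,
           vendor_licensing=0, compute=8_000,
           retraining_cost_per_cycle=12_000, retraining_cycles_per_year=4),
]
for scenario, costs in compare(options, months=24,
                               scenarios={"base": 1.0, "high": 1.5}).items():
    print(scenario, costs)
```

With these made-up inputs the managed option stays cheaper unless compute demand grows to roughly 3.1 times the base level, at which point the self-managed option's lower per-unit compute cost starts to win. Surfacing that crossover point is more persuasive to a CFO than either option's headline price.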

Final Perspective

Designing AI systems for CFO approval requires architectural maturity.

It means embedding:

- Financial metrics

- Cloud governance

- Cost visibility

- Scalable platform thinking

AI maturity is not only about model quality.

It is about unit economics, predictable cloud usage, and infrastructure discipline.

When technical design and financial clarity align, AI moves from experimental investment to strategic capability.