Designing an AI Platform Team

Over the last few quarters, many organizations have moved from experimenting with AI features to operationalizing them. What began as small, team-driven prototypes is now evolving into production-grade systems that demand reliability, governance, cost discipline, and cross-team coordination.

At this stage, one structural question keeps surfacing:

Do we need an AI platform team?

From what I am observing across enterprises, the answer is increasingly yes. Not as a control tower that slows innovation, but as an enabling layer that makes AI development repeatable, safe, and scalable.

Let me share how I think about designing such a team.

1. Why an AI Platform Team Becomes Necessary

Early AI adoption often looks like this:

- Individual product teams call external APIs directly

- Prompts live inside application code

- Model selection is ad hoc

- Evaluation is manual

- Costs are tracked at a coarse level

This works during experimentation. It does not scale across dozens of use cases.

As adoption grows, common needs emerge:

- Secure model access

- Prompt management

- Evaluation frameworks

- Cost visibility

- Data governance

- Model routing and fallback logic

Without a shared platform, every team rebuilds the same infrastructure in slightly different ways.

That fragmentation increases risk and operational cost.

An AI platform team exists to reduce this duplication.

2. The Core Responsibilities of an AI Platform Team

I see five foundational layers.

2.1 Model Access Layer

This layer abstracts the underlying model providers. Whether teams use APIs from providers such as OpenAI or Anthropic, or managed services from Microsoft Azure, application developers should not integrate with them directly.

The platform should provide:

- A unified internal API

- Authentication and rate limiting

- Centralized logging

- Version control of model endpoints

This reduces vendor lock-in risk and simplifies governance.
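As a minimal sketch of what such a unified internal API could look like, the example below routes a logical model alias to an ordered list of providers and falls back when one fails. Everything here is an assumption for illustration: `ModelGateway`, the alias `general-chat`, and the two stand-in provider functions are hypothetical, and a real implementation would wrap vendor SDK calls and add authentication and rate limiting.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A provider is modelled as a plain callable: prompt in, completion text out.
# In a real platform each one would wrap a vendor SDK call (assumption).
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider on a route has failed."""


@dataclass
class ModelGateway:
    providers: Dict[str, Provider]   # provider name -> callable
    routes: Dict[str, List[str]]     # logical alias -> ordered provider names
    call_log: List[dict] = field(default_factory=list)  # stand-in for centralized logging

    def complete(self, alias: str, prompt: str) -> str:
        """Try each provider on the route in order and return the first success."""
        for name in self.routes[alias]:
            try:
                result = self.providers[name](prompt)
            except Exception as exc:  # simplification: any error triggers fallback
                self.call_log.append(
                    {"alias": alias, "provider": name, "ok": False, "error": str(exc)}
                )
                continue
            self.call_log.append({"alias": alias, "provider": name, "ok": True})
            return result
        raise AllProvidersFailed(f"no provider succeeded for alias {alias!r}")


# Hypothetical stand-ins for real provider clients.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("primary timed out")


def stable_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


gateway = ModelGateway(
    providers={"primary": flaky_primary, "secondary": stable_secondary},
    routes={"general-chat": ["primary", "secondary"]},
)
answer = gateway.complete("general-chat", "hello")
print(answer)
print(gateway.call_log)
```

Because application code only knows the alias, swapping or reordering providers becomes a routing change inside the platform rather than a change in every product codebase.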

2.2 Prompt and Configuration Management

Prompts are no longer simple strings. They represent behavioral configuration.

The platform should provide:

- Prompt versioning

- Change tracking

- Experiment tagging

- Rollback capability

In many ways, prompts deserve the same treatment as code. In some workflows, they need even more discipline.
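To make the idea of versioned, reversible prompts concrete, here is a small in-memory sketch. The `PromptRegistry` class, its method names, and the example prompt are hypothetical; a production version would presumably sit on a database or a Git-backed store rather than a dictionary.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class PromptVersion:
    version: int
    template: str
    author: str
    tag: Optional[str] = None  # optional experiment label


class PromptRegistry:
    """Keeps every published version and tracks which one is active."""

    def __init__(self) -> None:
        self._history: Dict[str, List[PromptVersion]] = {}
        self._active: Dict[str, int] = {}

    def publish(self, name: str, template: str, author: str,
                tag: Optional[str] = None) -> PromptVersion:
        """Append a new version and make it the active one."""
        history = self._history.setdefault(name, [])
        entry = PromptVersion(len(history) + 1, template, author, tag)
        history.append(entry)
        self._active[name] = entry.version
        return entry

    def rollback(self, name: str, version: int) -> PromptVersion:
        """Re-activate an earlier version without deleting later ones."""
        history = self._history[name]
        if not 1 <= version <= len(history):
            raise ValueError(f"unknown version {version} for prompt {name!r}")
        self._active[name] = version
        return history[version - 1]

    def active(self, name: str) -> PromptVersion:
        return self._history[name][self._active[name] - 1]

    def history(self, name: str) -> List[PromptVersion]:
        return list(self._history[name])


registry = PromptRegistry()
registry.publish("support-summary", "Summarize the ticket: {ticket}", author="alice")
registry.publish(
    "support-summary",
    "Summarize the ticket in three bullets: {ticket}",
    author="bob",
    tag="exp-bullets",
)
registry.rollback("support-summary", 1)  # the experiment underperformed
print(registry.active("support-summary"))
```

Rollback only moves the active pointer, so the full change history stays available for audit and comparison.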

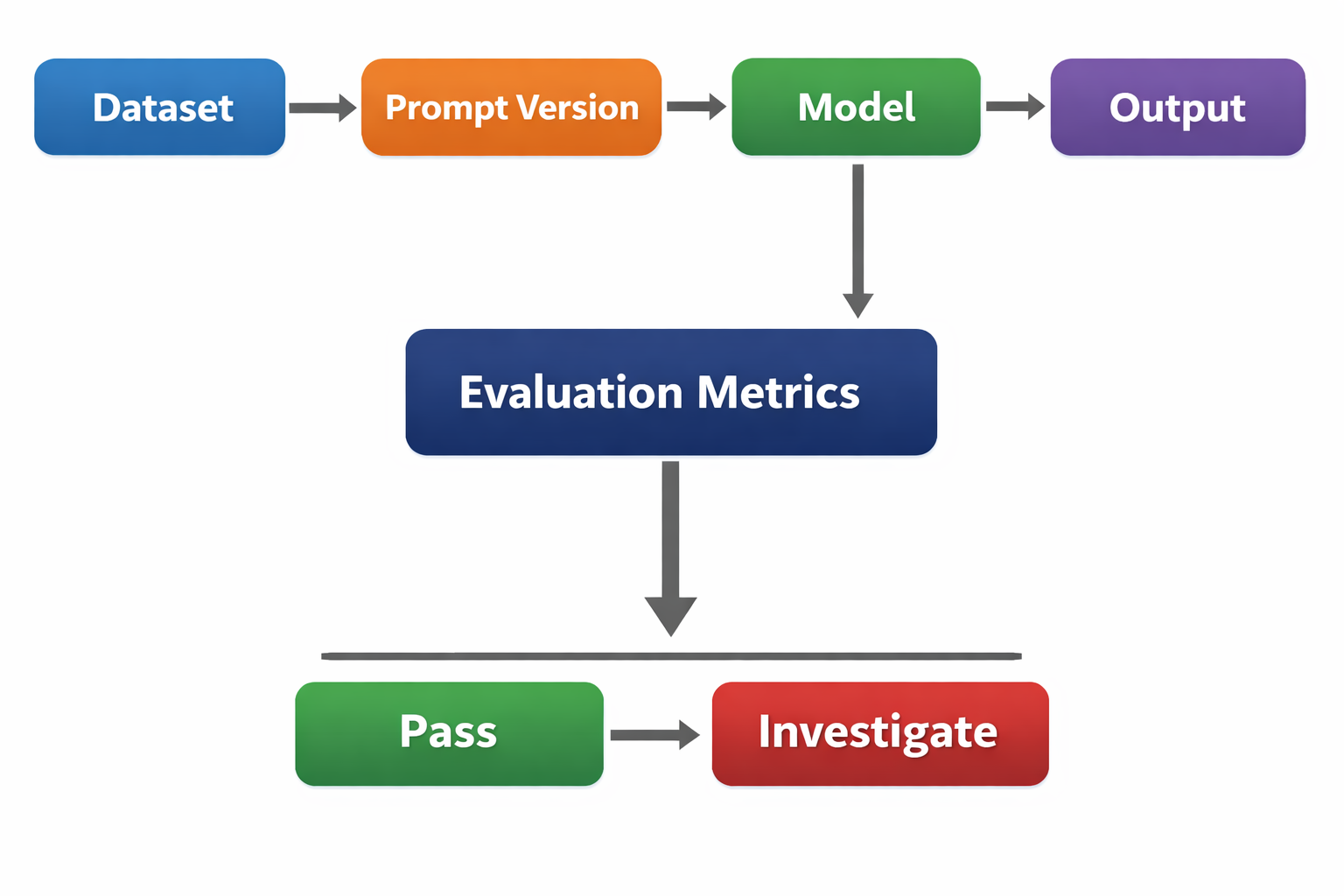

2.3 Evaluation Infrastructure

Traditional unit tests are not sufficient for generative systems.

An AI platform team should build:

- Golden datasets

- Automated evaluation pipelines

- Regression tracking

- Safety checks

- Human review workflows

This shifts evaluation from ad hoc sampling to systematic measurement.

A simple conceptual flow might look like this:

Evaluation becomes a gate, not an afterthought.
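One way to picture evaluation as a gate is a check that scores a candidate against a golden dataset and blocks the release if it regresses past a tolerance. This is a sketch under stated assumptions: the phrase-matching scorer, the `max_regression` threshold of 0.02, and the sample data are all hypothetical, and real pipelines would typically use richer scoring such as rubric-based or model-graded checks.

```python
from typing import Callable, List, NamedTuple


class GoldenExample(NamedTuple):
    prompt: str
    required_phrase: str  # simplistic pass criterion, for illustration only


def pass_rate(generate: Callable[[str], str], dataset: List[GoldenExample]) -> float:
    """Fraction of golden examples whose output contains the required phrase."""
    passed = sum(
        1 for ex in dataset
        if ex.required_phrase.lower() in generate(ex.prompt).lower()
    )
    return passed / len(dataset)


def evaluation_gate(candidate_rate: float, baseline_rate: float,
                    max_regression: float = 0.02) -> bool:
    """Allow release only if the candidate stays within tolerance of the baseline."""
    return candidate_rate >= baseline_rate - max_regression


golden = [
    GoldenExample("What is the refund window?", "30 days"),
    GoldenExample("Which plan includes SSO?", "enterprise"),
]


def candidate(prompt: str) -> str:
    # Hypothetical stand-in for a call to the candidate model or prompt version.
    answers = {
        "What is the refund window?": "Refunds are accepted within 30 days.",
        "Which plan includes SSO?": "SSO is part of the Business plan.",
    }
    return answers.get(prompt, "")


baseline_rate = 1.0  # assumed score of the version currently in production
candidate_rate = pass_rate(candidate, golden)
release_allowed = evaluation_gate(candidate_rate, baseline_rate)
print(candidate_rate, release_allowed)
```

Storing the pass rate for each run is also what makes regression tracking possible over time.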

2.4 Cost and Usage Observability

AI systems introduce a new operational metric: cost per query.

The platform team should provide:

- Per-application usage dashboards

- Token-level cost tracking

- Latency monitoring

- Per-model cost comparison

- Budget alerts

This is particularly important when cloud providers bundle AI services within broader compute contracts, where AI spend is hard to separate from the rest of the bill.

Without visibility, experimentation can quietly become expensive.
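A rough sketch of token-level cost tracking with budget alerts follows. The prices, model names, application names, and budgets are invented for illustration and do not reflect any provider's actual pricing; `UsageLedger` is a hypothetical name.

```python
from collections import defaultdict
from decimal import Decimal
from typing import Dict, List

# Hypothetical prices in USD per 1,000 tokens: (input, output).
PRICE_PER_1K_TOKENS = {
    "small-model": (Decimal("0.0005"), Decimal("0.0015")),
    "large-model": (Decimal("0.0100"), Decimal("0.0300")),
}


class UsageLedger:
    """Accumulates spend per application and flags budget overruns."""

    def __init__(self, monthly_budgets: Dict[str, Decimal]) -> None:
        self.budgets = monthly_budgets
        self.spend: Dict[str, Decimal] = defaultdict(Decimal)  # starts at 0

    def record(self, app: str, model: str,
               input_tokens: int, output_tokens: int) -> Decimal:
        """Compute the cost of one call and add it to the app's running total."""
        price_in, price_out = PRICE_PER_1K_TOKENS[model]
        cost = (price_in * input_tokens + price_out * output_tokens) / 1000
        self.spend[app] += cost
        return cost

    def over_budget(self) -> List[str]:
        """Names of applications whose spend exceeds their budget."""
        return sorted(
            app for app, spent in self.spend.items()
            if app in self.budgets and spent > self.budgets[app]
        )


ledger = UsageLedger({"search": Decimal("1.00"), "support-bot": Decimal("0.10")})
first_cost = ledger.record("search", "small-model", input_tokens=2000, output_tokens=1000)
ledger.record("support-bot", "large-model", input_tokens=10000, output_tokens=5000)
print(first_cost, ledger.over_budget())
```

`Decimal` is used instead of floats so that small per-call amounts add up without rounding drift. Dividing an application's total spend by its request count gives the cost-per-query figure mentioned above.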

2.5 Governance and Risk Controls

As AI systems influence user experiences and internal decisions, risk must be managed centrally.

Platform-level controls should include:

- Data filtering and redaction

- Output moderation layers

- Confidence scoring and escalation logic

- Audit logs

This allows product teams to innovate within guardrails.
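As an illustration of a guardrail that product teams get without extra work, the sketch below redacts obvious personal data before a prompt leaves the platform and writes an audit entry. The regular expressions are deliberately simple and would miss many real-world formats; the function names and the audit record shape are assumptions, not a recommended schema.

```python
import re
from typing import Dict, List, Tuple

# Deliberately simple patterns for illustration; real redaction needs broader coverage.
PATTERNS = (
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("PHONE", re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")),
)

audit_log: List[dict] = []  # stand-in for a durable, append-only audit store


def redact(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace matches with a placeholder and count redactions per category."""
    counts: Dict[str, int] = {}
    for label, pattern in PATTERNS:
        text, found = pattern.subn(f"[{label}]", text)
        if found:
            counts[label] = found
    return text, counts


def guarded_prompt(app: str, text: str) -> str:
    """Redact the prompt and record what was removed, never the raw text."""
    clean, counts = redact(text)
    audit_log.append({"app": app, "redactions": counts})
    return clean


clean = guarded_prompt(
    "support-bot",
    "Contact jane.doe@example.com or 555-123-4567 about the refund.",
)
print(clean)
print(audit_log)
```

The audit entry stores only the redaction counts, so the log itself does not become a second copy of the sensitive data.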

3. Team Structure and Skills

An AI platform team is not just ML engineers.

A healthy, production-ready composition typically includes:

- Platform engineers: Infrastructure, orchestration, CI/CD, runtime reliability

- ML engineers: Model integration, evaluation pipelines, performance optimization

- Data engineers: Data pipelines, feature stores, embedding pipelines, data quality and lineage

- Applied researchers: Experimentation, model evaluation, retrieval strategy, architecture evolution

- Security specialists: Access controls, data governance, model risk management

- FinOps or cloud cost analysts: Usage monitoring, cost allocation, efficiency optimization

- Product managers (focused on developer experience): Internal tooling strategy, platform adoption, roadmap alignment

The mission is internal enablement.

In my view, the best AI platform teams operate like cloud platform teams did a decade ago. They provide paved roads. Product teams can build faster because core complexity is abstracted.

4. Centralized or Embedded?

This is where nuance matters.

The platform team should centralize infrastructure and governance. However, applied AI expertise should remain embedded within product teams.

A practical model:

- Platform team owns shared infrastructure

- Product teams own domain prompts and feature logic

- Evaluation standards are defined centrally

- Iteration happens locally

This creates alignment without bottlenecking innovation.

5. Avoiding the Control Tower Trap

One risk is turning the AI platform team into a gatekeeper that slows progress.

To avoid this:

- Provide self-service APIs

- Offer clear documentation

- Publish model benchmarks internally

- Maintain transparency around costs

- Create feedback loops with product teams

The goal is acceleration, not control.

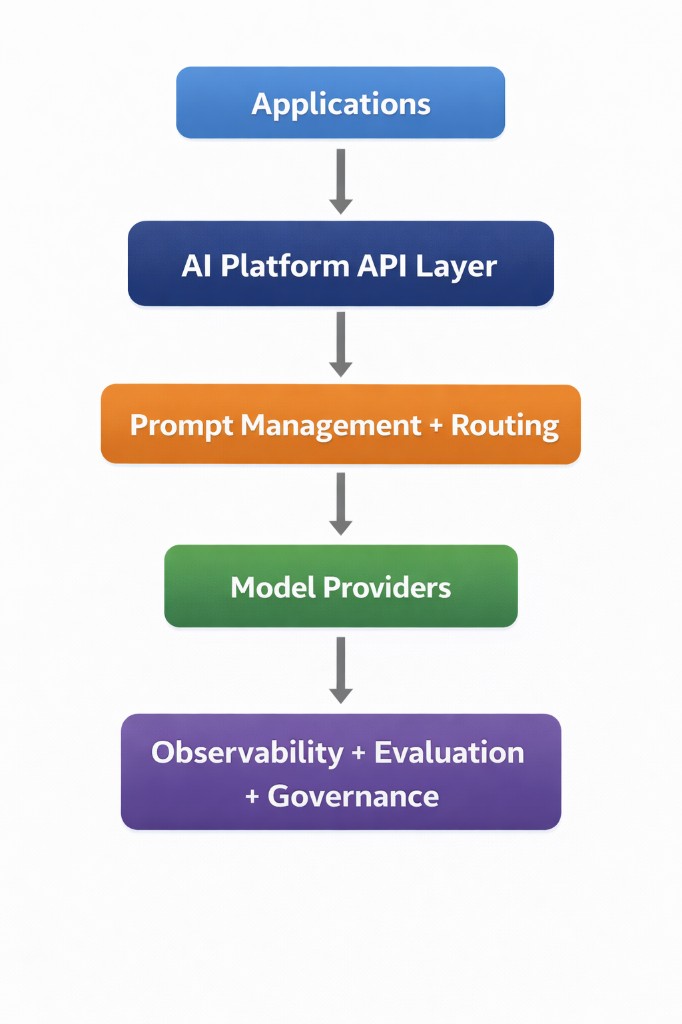

6. A Reference Architecture View

At a high level, an AI platform architecture may resemble:

Each layer isolates complexity.

Each layer introduces measurable control.

7. Signals You Need an AI Platform Team

If you see these patterns, it is time:

- Multiple teams independently integrating external AI APIs

- Inconsistent safety behavior across products

- Surprising cloud bills

- No shared evaluation standards

- Difficulty switching or testing new models

Platform thinking becomes essential once AI moves beyond experimentation.

Final Reflection

We are entering a phase where AI capabilities are impressive, but operational maturity determines success.

An AI platform team is not about centralizing power. It is about centralizing discipline.

It provides the connective tissue between experimentation and reliable production systems.

As organizations scale AI adoption, the question is no longer whether to build such a team.

The question becomes:

How early can we design it thoughtfully?

If we get this right, AI becomes not just a feature, but a sustainable capability.