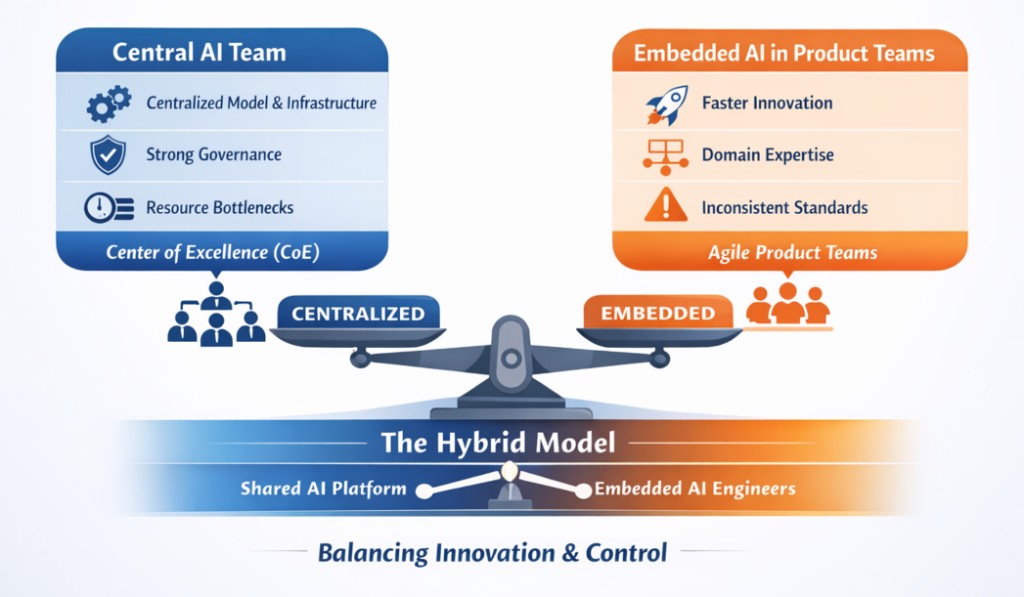

Central AI Team or Embedded AI in Product Teams?

As AI systems move from experimentation into production, one structural question keeps surfacing inside organizations:

Should AI sit in a central team, or should AI engineers be embedded directly inside product teams?

This is not just an organizational preference. It influences delivery speed, architectural coherence, governance maturity, and long-term scalability.

In this post, I want to explore the trade-offs pragmatically: not from theory, but from what we are observing as AI becomes production infrastructure.

1. The Central AI Team Model

In this structure:

- AI engineers and data scientists sit in one dedicated organization.

- They build shared infrastructure and reusable components.

- They partner with product teams as internal collaborators.

- They define evaluation standards and governance practices.

This is often referred to as a Center of Excellence.

Where It Works Well

Early capability building

When AI expertise is limited, concentrating talent accelerates learning and prevents fragmentation.

Infrastructure-heavy environments

If model hosting, evaluation frameworks, feature stores, or retrieval systems are still evolving, a centralized platform mindset reduces duplication.

Governance-sensitive domains

Consistency in bias testing, documentation, and model review becomes easier when ownership is consolidated.

Where It Struggles

- Product teams queue for AI support.

- Context gaps emerge between model builders and user-facing workflows.

- AI becomes a "service function" rather than a product capability.

The hidden cost is slower iteration.

2. The Embedded AI Model

Here, AI engineers sit directly within product squads.

- Each team owns its AI features end-to-end.

- Experimentation happens close to user feedback.

- AI becomes part of normal product development.

Where It Works Well

Speed of iteration

Prompt tuning, evaluation loops, and retrieval adjustments move faster when the decision-makers sit together.

Clear accountability

The team that defines the feature also owns its model performance.

Tighter integration

AI becomes embedded into workflows rather than layered on top.

Where It Struggles

- Infrastructure duplication appears.

- Evaluation standards diverge.

- Monitoring and safety practices vary.

- Technical debt accumulates across teams.

The hidden cost is fragmentation.

3. A Useful Distinction: Platform AI vs Product AI

As organizations mature, it becomes clear that AI operates across two layers:

AI Platform Layer

- Model hosting

- Deployment pipelines

- Evaluation frameworks

- Security controls

- Monitoring and drift detection

- Prompt and experiment tracking

AI Product Layer

- Customer-facing copilots

- Recommendation systems

- Retrieval pipelines

- Classification workflows

- Decision-support features

If product teams own both layers, inconsistency emerges. If a central team owns both layers, speed declines.

This tension naturally leads to a hybrid model.

4. The Hybrid (Hub-and-Spoke) Model

In this structure:

The Central AI Platform Team (Hub)

Owns:

- Shared infrastructure

- Deployment standards

- Evaluation tooling

- Governance patterns

- Security reviews

Embedded AI Engineers (Spokes)

Own:

- Feature design

- Prompt strategy

- Experimentation

- Customer outcomes

- Iterative improvements

This model attempts to balance:

- Velocity

- Consistency

- Reusability

- Accountability

It recognizes that AI is both infrastructure and product capability.
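One way to make this split concrete is to write the ownership map down somewhere teams can query it. The snippet below is a minimal sketch, not a prescribed implementation: the `Owner` enum, the `OWNERSHIP` table, and the `owner_of` helper are hypothetical names introduced for illustration, and the entries simply mirror the hub and spoke lists above.

```python
from enum import Enum


class Owner(Enum):
    """Hypothetical labels for the two sides of the hub-and-spoke model."""
    HUB = "central AI platform team"
    SPOKE = "embedded AI engineers"


# Responsibilities copied from the hub and spoke lists; adjust to your organization.
OWNERSHIP = {
    "shared infrastructure": Owner.HUB,
    "deployment standards": Owner.HUB,
    "evaluation tooling": Owner.HUB,
    "governance patterns": Owner.HUB,
    "security reviews": Owner.HUB,
    "feature design": Owner.SPOKE,
    "prompt strategy": Owner.SPOKE,
    "experimentation": Owner.SPOKE,
    "customer outcomes": Owner.SPOKE,
    "iterative improvements": Owner.SPOKE,
}


def owner_of(responsibility: str) -> Owner:
    """Return the owning side, or fail loudly if nobody has been assigned."""
    key = responsibility.strip().lower()
    if key not in OWNERSHIP:
        # An unassigned responsibility is treated as an error, not a default.
        raise KeyError(f"No owner assigned for {responsibility!r}")
    return OWNERSHIP[key]


if __name__ == "__main__":
    print(owner_of("Evaluation tooling").value)
    print(owner_of("Prompt strategy").value)
```

Raising on an unknown responsibility is a deliberate choice in this sketch: a gap in the map is surfaced immediately instead of silently falling to whichever team happens to pick it up.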

5. Governance Is the Deciding Multiplier

Regardless of structure, one principle consistently determines success:

Clear lifecycle ownership.

AI systems require:

- Defined evaluation metrics

- Monitoring dashboards

- Bias and safety checks

- Incident response processes

- Transparent documentation
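The requirements above can be turned into a simple ownership check. What follows is a rough sketch under stated assumptions: `REQUIRED_ARTIFACTS`, `AISystemRecord`, and `missing_owners` are hypothetical names, the artifact keys paraphrase the list above, and the team names in the usage example are made up.

```python
from dataclasses import dataclass, field

# Lifecycle artifacts paraphrased from the list above (assumed naming).
REQUIRED_ARTIFACTS = (
    "evaluation_metrics",
    "monitoring_dashboard",
    "bias_and_safety_checks",
    "incident_response",
    "documentation",
)


@dataclass
class AISystemRecord:
    """Hypothetical record of who owns each lifecycle artifact for one AI system."""
    name: str
    owners: dict[str, str] = field(default_factory=dict)  # artifact -> owning team


def missing_owners(record: AISystemRecord) -> list[str]:
    """List required artifacts with no named owner, in checklist order."""
    return [
        artifact
        for artifact in REQUIRED_ARTIFACTS
        if not record.owners.get(artifact, "").strip()
    ]


if __name__ == "__main__":
    # Illustrative data only; the system and team names are invented.
    copilot = AISystemRecord(
        name="support-copilot",
        owners={
            "evaluation_metrics": "support product squad",
            "monitoring_dashboard": "AI platform team",
            "documentation": "support product squad",
        },
    )
    print(missing_owners(copilot))
```

A check like this could run as part of a release review, so that an AI feature with an unowned artifact is flagged before it ships, whichever team structure is in place.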

Responsible AI cannot be an afterthought. The ideas discussed in "Building Responsible AI Systems" reinforce that governance must scale with deployment maturity.

Organizational design must support that discipline.

Without it, centralization becomes bureaucracy, and embedding becomes risk.

6. Practical Questions to Guide the Decision

Instead of asking which model is superior, consider:

- Is AI core to product differentiation or an enabling capability?

- How mature is our ML infrastructure?

- How regulated is our domain?

- Do product teams have sufficient AI literacy?

- Is our bottleneck speed or consistency?

The answers will shape the structure more reliably than trends will.

Closing Reflection

Organizational design for AI is not static.

Teams often centralize to build capability, then embed to accelerate product innovation, then reintroduce centralized governance as scale increases.

The goal is not to choose a side.

It is to design an operating model where:

- Innovation happens close to users

- Standards protect customers

- Infrastructure scales sustainably

- Accountability is unambiguous

AI is no longer an experimental layer. It is becoming core architecture. And the way we structure teams around it will define how responsibly and effectively we build the future.